Chapter 2 Assessment of hepatic function

Implications for the surgical patient

Preoperative Considerations

In recent years, surgeons have increasingly pushed the limits of hepatic resection for a number of diseases, given the improvements in long-term outcome that can be achieved; a particularly striking example is resection for metastatic colorectal cancer (see Chapters 81A and 90A). Additionally, laparoscopic and nonanatomic resections are being performed with increasing frequency, as excellent oncologic outcome is being reported for both primary and secondary hepatic neoplasms. These liver parenchymal–sparing procedures are often performed in the setting of cirrhosis or treatment with potentially hepatotoxic chemotherapy and therefore require a very precise assessment of both preoperative and predicted postoperative liver function.

Despite a long list of available functional studies, imaging techniques, and markers to assess preoperative liver function (Table 2.1), no single measure or combination of measures has been demonstrated to be more predictive of outcome following resection than clinical assessment by an experienced hepatic surgeon. With improvements in surgical technique, anesthesia support, and ICU care, surgical mortality following hepatic resection has fallen to less than 3% at many institutions. However, death and complications related to liver failure following resection remain a significant cause of overall morbidity and mortality, with liver failure accounting for 16% to 50% of all mortality, even in the setting of metastatic colorectal cancer (Nagao et al, 1987; Nagasue et al, 1993; Doci et al, 1995; Nordlinger et al, 1996). The continued search for an ideal measure of liver function before and after resection promises to allow for better selection of patients as well as better selection of operative procedures. This chapter reviews the measures of liver reserve that are currently available, which can broadly be divided into four categories: 1) clinical scoring systems using standard assessment and laboratory values, 2) measurements of hepatic uptake and excretion, 3) measurements of hepatic metabolism and excretion, and 4) measurements of predicted postoperative liver volume.


Clinical Scoring Systems

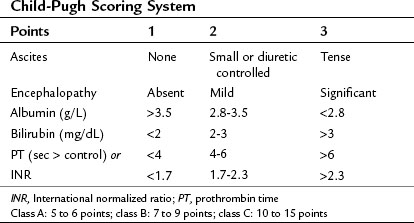

The clinical assessment of hepatic function has evolved from the Child’s system (Child et al, 1964) and its subsequent modification, the Child-Pugh score (Pugh et al, 1973) as shown in Table 2.2. This scoring system was originally developed to predict mortality in patients with portal hypertension after portocaval shunt operations, and it has evolved to become a useful predictor of liver-related mortality in patients with cirrhosis.

Using a combination of clinical assessments and standard laboratory values, the Child-Pugh score is a quick assessment that can be easily performed on a preoperative patient (see Chapter 70B). The laboratory values in the scoring system are total bilirubin, serum albumin, and prothrombin time (PT)/international normalized ratio (INR). Bilirubin alone is neither sensitive nor specific for intrinsic liver disease but serves as an indirect measure of the ability of the liver to take up and conjugate bilirubin and to eventually secrete it. Other causes of bilirubin elevations include biliary obstruction, primary biliary cirrhosis, sclerosing cholangitis, and hemolytic conditions. Serum albumin level is a measure of the liver’s synthetic ability, as albumin is produced exclusively in the liver. Its half-life is approximately 20 days; therefore it is not sensitive in detecting acute hepatic decompensation. Additionally, protein-losing enteropathies, malnutrition, renal disease, and burns can lead to hypoalbuminemia. PT/INR is also an important measure of liver synthetic function, because the liver is the major site for synthesis of blood clotting factors. Clotting factors V, VII, and prothrombin are critical components of the extrinsic and common pathways of the clotting cascade, which the PT directly measures. The half-life of factor VII is around 4 to 6 hours; therefore INR is a much more dynamic measure of liver synthetic function than serum albumin levels. Problems with vitamin K absorption and intravascular consumption of clotting factors can lead to elevations in PT/INR as well.

The two remaining components of the Child-Pugh classification are subjective assessments of ascites and encephalopathy. Patients are divided into three classes based on points given for each category. In patients with cirrhosis, the 1-year mortality related to liver failure is minimal (<5%) for Child-Pugh class A patients, compared with 20% and 55% for class B and C patients, respectively (Conn, 1981; Table 2.3).
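The point assignment can be sketched in a few lines of Python. Table 2.2 is not reproduced in this copy of the text, so the thresholds below are the commonly published Child-Pugh cutoffs and are assumed to match it; the function name `child_pugh` and the three-level grading labels for ascites and encephalopathy are illustrative choices, not part of the original.

```python
def child_pugh(bilirubin_mg_dl, albumin_g_dl, inr, ascites, encephalopathy):
    """Return (score, class) using commonly published Child-Pugh thresholds.

    ascites and encephalopathy are graded 'none', 'mild', or 'severe'
    (hypothetical labels for the 1-, 2-, and 3-point levels).
    """
    grade_points = {"none": 1, "mild": 2, "severe": 3}

    score = 0
    # Total bilirubin (mg/dL): <2 -> 1 point, 2 to 3 -> 2 points, >3 -> 3 points
    score += 1 if bilirubin_mg_dl < 2 else 2 if bilirubin_mg_dl <= 3 else 3
    # Serum albumin (g/dL): >3.5 -> 1 point, 2.8 to 3.5 -> 2 points, <2.8 -> 3 points
    score += 1 if albumin_g_dl > 3.5 else 2 if albumin_g_dl >= 2.8 else 3
    # INR: <1.7 -> 1 point, 1.7 to 2.3 -> 2 points, >2.3 -> 3 points
    score += 1 if inr < 1.7 else 2 if inr <= 2.3 else 3
    # Subjective clinical components
    score += grade_points[ascites] + grade_points[encephalopathy]

    # Class A: 5 to 6 points, class B: 7 to 9 points, class C: 10 to 15 points
    cls = "A" if score <= 6 else "B" if score <= 9 else "C"
    return score, cls
```

For example, `child_pugh(1.0, 4.0, 1.1, "none", "none")` describes a well-compensated class A patient. The sketch makes plain that two of the five inputs are clinical judgments rather than measurements.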

When used for risk stratification prior to hepatic resection, class B and C patients clearly have a higher mortality rate and lower survival rate compared with class A patients (Franco et al, 1990; Nonami et al, 1997). Indeed, very few patients with Child-Pugh class B cirrhosis would be considered candidates for even limited hepatic resection, and none would tolerate a major hepatectomy; class C cirrhosis is an absolute contraindication for even the most minor intervention. The major limitation of the Child-Pugh system is that it is not considered applicable to noncirrhotic patients or to those with other conditions, such as steatosis (see Chapters 65 and 87). Furthermore, there is variability among class A patients in terms of their ability to tolerate a major hepatectomy, and a few good-risk class B patients may also be candidates. Therefore surgeons are faced with using their best judgment to determine “poor-risk” class A patients and exclude or alter plans for resection and to identify “good-risk” class B patients.

Within the class A category, an additional means of determining risk of postoperative hepatic decompensation is to assess for subtle signs of portal hypertension prior to resection. In the absence of splenomegaly or varices on imaging, which are rarely present in such patients, the platelet count may be used as a surrogate marker of subclinical portal hypertension. In a cirrhotic patient with otherwise good hepatic function, thrombocytopenia is highly suggestive of significant hypersplenism and portal hypertension severe enough to contraindicate resection in most cases. A direct way to assess for this is measurement of hepatic venous wedge pressure (Bruix et al, 1996), which can effectively stratify class A patients into high- and low-risk groups; however, this test requires expertise that is not widely available and is not routinely used.

The model for end-stage liver disease (MELD) is a clinical scoring system currently used to predict survival of patients with end-stage liver disease awaiting liver transplantation (Kamath et al, 2001; Wiesner et al, 2001). The MELD score considers bilirubin, INR, and creatinine, the last as a measure of kidney function. The MELD score is derived from a complex calculation as follows:
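The calculation itself does not appear in this copy of the text. The widely published form of the score, as adopted for transplant allocation in the United States, is given here on the assumption that it is the intended formula. Bilirubin and creatinine are in mg/dL; by convention, laboratory values below 1.0 are set to 1.0 and creatinine is capped at 4.0 mg/dL, and the result is rounded to the nearest integer.

```latex
\[
\mathrm{MELD} = 3.78\,\ln(\text{bilirubin}) + 11.2\,\ln(\mathrm{INR}) + 9.57\,\ln(\text{creatinine}) + 6.43
\]
```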

In cirrhotic patients, the MELD score predicts the development of postoperative liver failure following hepatectomy for hepatocellular carcinoma (HCC), with a cutoff score greater than 11 predictive of worse outcome (Freeman et al, 2002; Teh et al, 2005; Cucchetti et al, 2006; see Chapters 70B, 90A, 90F, and 97A).

Both the MELD and Child-Pugh scores are meant to assess cirrhotic patients in particular and are considered unreliable in noncirrhotic patients (see Chapters 65 and 87). Furthermore, the subjective assessment of ascites and encephalopathy in the Child-Pugh score requires considerable input from the hepatic surgeon with regard to suitability for resection. It is likely this input that allows the experienced surgeon to apply clinical judgment in selecting patients for resection, and it is why the Child-Pugh score alone has been demonstrated to be equivalent to most of the functional dynamic liver reserve studies published (Albers et al, 1989).

Dynamic Liver Tests

Measurement of Hepatic Uptake and Elimination

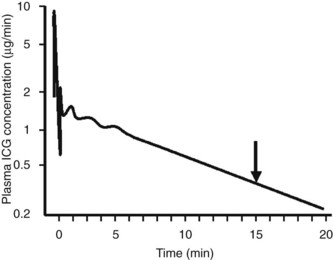

Indocyanine green (ICG) is an anionic organic dye that is protein bound, selectively taken up by hepatocytes, and excreted unchanged via the bile. Plasma extraction of ICG by the liver is an active process that can be saturated with high doses of ICG (Faybik et al, 2006). Therefore maximal removal of ICG can be measured, and it reflects the uptake and excretional capabilities of the liver, which can be extrapolated to reflect hepatic blood flow and functional hepatocyte mass (Caesar et al, 1961).

Assessment of ICG clearance typically involves measuring serum levels at several points following intravenous administration (see Table 2.3). Clearance at 15 minutes is the most commonly used measurement; ICG retention values above 10% to 15% at 15 minutes are considered abnormal and are used as a cutoff to identify patients at high risk for liver failure following liver resection (Lam et al, 1999; Fig. 2.1). Poon and colleagues have recently recommended a safe limit as high as 20% in Child-Pugh class A patients (Poon et al, 2004). Pulse infrared spectroscopy can also be used to detect real-time, continuous blood levels, similar to pulse oximetry, without the need to obtain serum measurements (Okochi et al, 2002).
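As an illustration of how serial serum levels translate into a 15-minute retention value, the following Python sketch fits a single elimination constant to the samples and extrapolates retention. It assumes simple mono-exponential plasma decay, which is a common simplification rather than a method described in this chapter; the function names are hypothetical.

```python
import math


def icg_elimination_constant(times_min, concentrations):
    """Estimate the elimination constant k (per minute).

    Uses a least-squares straight-line fit of ln(concentration) against time,
    which assumes mono-exponential decay: C(t) = C0 * exp(-k * t).
    """
    logs = [math.log(c) for c in concentrations]
    n = len(times_min)
    mean_t = sum(times_min) / n
    mean_y = sum(logs) / n
    sxy = sum((t - mean_t) * (y - mean_y) for t, y in zip(times_min, logs))
    sxx = sum((t - mean_t) ** 2 for t in times_min)
    # The fitted slope is -k, so negate it to report a positive constant.
    return -sxy / sxx


def icg_retention_percent(k_per_min, minutes=15.0):
    """Percentage of the extrapolated time-zero level remaining after `minutes`."""
    return 100.0 * math.exp(-k_per_min * minutes)
```

Under this model, a retention of 10% at 15 minutes corresponds to an elimination constant of roughly 0.15 per minute, which gives a sense of how the retention cutoffs quoted above relate to the elimination constants reported in some studies.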

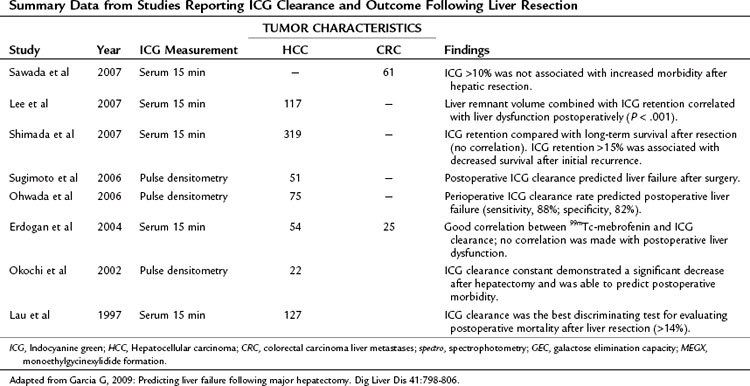

ICG elimination is by far the most widely used and published functional assessment of liver reserve worldwide (Yumoto et al, 1994; Kwon et al, 1997; Fujioka et al, 1999; Tanaka et al, 1999; Wakabayashi et al, 2002; Kokudo et al, 2003; Satoh et al, 2003; Bennink et al, 2004). Imamura and colleagues (2003) reported a 0% mortality rate in a series of 1056 hepatic resections for HCC and cirrhosis using an ICG-15 cutoff of less than 10% for a safe extended resection, 10% to 20% for hemihepatectomy, 20% to 30% for segmentectomy, and only enucleation for an ICG-15 greater than 40%. Noguchi and colleagues (1990) used the ICG maximal removal rate of 0.8 µg/kg cm3 as a cutoff for safe resection, and Ohwada and colleagues (2006) found real-time monitoring of ICG beneficial in evaluating hepatic reserve before, during, and after hepatic resection. ICG clearance has also been useful in predicting short-term prognosis in liver transplant patients (Clements et al, 1988; Lamesch et al, 1990; Oellerich et al, 1991; Yamanaka et al, 1992; Jalan et al, 1994; Tsubono et al, 1996; Igea et al, 1999; Plevris et al, 1999; Jochum et al, 2006). Tsubono and colleagues (1996) noted that a day 1 ICG elimination constant was a better predictor of graft outcome than any other conventional liver function test, and Clements and colleagues (1988) found ICG clearance to be impaired with graft rejection, but it improved as rejection resolved.
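The ICG-15 bands attributed above to Imamura and colleagues can be written as a simple lookup. This is a sketch of the cutoffs as summarized in this chapter only, not a clinical decision tool: the function name is hypothetical, the treatment of values falling exactly on a boundary is an assumption, and the 30% to 40% range is left undefined because the text does not state a recommendation for it.

```python
def resection_extent_for_icg15(icg15_percent):
    """Map ICG retention at 15 minutes (%) to the extent of resection
    listed in the text. Returns None where the text gives no band."""
    if icg15_percent < 0 or icg15_percent > 100:
        raise ValueError("ICG-15 retention must be between 0 and 100 percent")
    if icg15_percent < 10:
        return "extended resection"
    if icg15_percent < 20:   # boundary handling is an assumption
        return "hemihepatectomy"
    if icg15_percent < 30:   # boundary handling is an assumption
        return "segmentectomy"
    if icg15_percent > 40:
        return "enucleation only"
    return None  # 30% to 40%: not specified in the text
```

Returning `None` for the unstated range, rather than guessing a category, keeps the sketch faithful to what is actually reported.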

Unfortunately, the findings for ICG have not been consistent, with many authors finding a lack of correlation between ICG levels and successful liver resection (Wakabayashi et al, 1997; Lam et al, 1999). This has been particularly true in the setting of portal vein embolization (PVE), and this variability is thought to be the result of the dependence of ICG clearance on total hepatic blood flow, with regional variations markedly altering retention values (Cherrick et al, 1960). This has led a number of surgeons to suggest that no quantitative liver function tests provide a clear advantage beyond Child-Pugh score for predicting outcome following resection (Albers et al, 1989; Bennett et al, 2005). In a recent study of 111 patients, Child-Pugh score predicted postoperative morbidity secondary to transient liver failure better than ICG clearance tests did, and this lack of superiority of ICG clearance compared with Child-Pugh score has been confirmed by others (Albers et al, 1989; Merkel et al, 1989; Kokudo et al, 2002). Bennett and Blumgart noted significant overlap in ICG parameters between patients with cirrhosis and healthy controls, and in their institutional experience of 1800 liver resections, there were only six deaths without the reliance on any dynamic functional tests (Jarnagin et al, 2002; Bennett et al, 2005).

Hepatobiliary Scintigraphy and Single-Photon Emission Computed Tomography

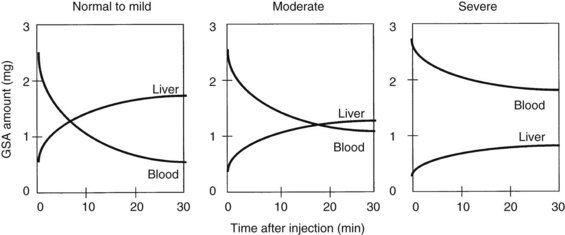

Radionuclide scintigraphy of the liver can provide information far beyond basic liver anatomy, allowing for assessment of functioning hepatocyte mass as well as hepatic hemodynamics. Uptake of liver-specific radionuclides can be used to quantify overall hepatic function, and calculations of the relative contributions to perfusion by the portal vein and hepatic artery can be used to estimate the presence of liver metastases and cirrhosis. Beyond the classic sulfur and gold colloid scans and liver uptake and excretion imaging of aminodiacetic acid derivatives (HIDA, DISIDA), more recent synthetic, radiolabeled asialoglycoproteins have been developed that are taken up by hepatocyte-specific receptors through an active transport process (Kudo et al, 1990). Reduction in receptor numbers is seen in patients with chronic liver disease; because receptors are absent from the surface of hepatocellular carcinoma cells, scintigraphy can be used to provide a functional assessment of the liver through its ability to take up and clear these compounds. One such asialoglycoprotein is technetium-99m-galactosyl human serum albumin (99mTc-GSA). Patients undergoing a Tc-GSA scan receive a bolus injection of 185 MBq of 99mTc-GSA, and a dynamic scintigraph is obtained with gamma cameras located over the heart and liver. Typical curves are shown in Figure 2.2 (Ha-Kawa et al, 1997).

Kwon and colleagues (1995) were among the first to use 99mTc-GSA to evaluate preoperative and postoperative hepatic reserve, finding that GSA uptake was similar to ICG-15 clearance in terms of its ability to predict liver function. Fujioka and colleagues (1999) assessed 99mTc-GSA uptake at 15 minutes and found it to correlate with postoperative complications, hepatic regeneration, and ICG-15 clearance.

Single-photon emission computed tomography (SPECT) has also been used to assess liver function prior to surgery. Hwang and colleagues (1999) used 99mTc-GSA with SPECT in 114 patients, 55 of whom underwent hepatectomy. A good correlation was found between SPECT results and ICG clearance and between predicted and actual 99mTc-GSA clearance postoperatively. Five patients with postoperative complications as a result of hepatic insufficiency had significantly lower 99mTc-GSA clearance than those without complications. Satoh and colleagues (2003) developed a predictive residual index and found that a cutoff of less than 0.38 predicted postoperative hepatic failure (see Chapter 15).

Scintigraphic imaging of the liver has the added advantage of providing an assessment of liver volume, and some authors suggest the volumetric analysis is better than that obtained with CT volumetry, because it measures only the functional volume; stromal and fibrous tissues are excluded because of the lack of the asialoglycoprotein receptors (Kwon et al, 2006). Scintigraphy may be a more accurate assessment of the regenerating liver than CT volumetry, given the lag time in cross-sectional imaging changes that are not seen with 99mTc-GSA clearance (Kwon et al, 2001; Kokudo et al, 2002). Age-related differences seen in 99mTc-GSA scintigraphic clearance in patients with similar CT liver volumes also seem to suggest a more accurate functional assessment of the liver (Wakabayashi et al, 2002), and 99mTc-GSA scintigraphy has also been used to assess liver volume following PVE (Hirai et al, 2003; Nanashima et al, 2006). Hirai and colleagues looked at liver volume and function using CT and 99mTc-GSA and found a rapid increase in uptake during the first week following PVE, but little thereafter. These changes were more pronounced on scintigraphy when compared with changes seen on CT alone, and patients with hepatic insufficiency postoperatively had poorer 99mTc-GSA uptake. Nanashima and colleagues (2006) had similar findings, demonstrating that 99mTc-GSA scintigraphy was better than CT alone for demonstrating functional liver volume following PVE.

In addition, 99mTc-GSA scintigraphy has been investigated for use in the assessment of graft parenchymal function following liver transplantation (Kita et al, 1998; Sakahara et al, 1999; Hori et al, 2006). Most recently, Hori and colleagues (2006) found good correlation between 99mTc-GSA and ICG clearance following liver transplantation; however, the advantage of ICG over scintigraphy was that ICG clearance measurement is portable and quick and can be easily repeated without the need for a radioactive tracer. Another disadvantage of 99mTc-GSA scintigraphy is its complex tracer kinetics in terms of uptake and distribution. Finally, 99mTc-GSA is currently only available for clinical use in Japan; it is not available in the United States or in Europe.

Measurements of Hepatic Metabolism and Elimination

Lidocaine Metabolism

Unlike ICG, lidocaine is metabolized by hepatocytes: it is converted to monoethylglycinexylidide (MEGX) by oxidative N-dealkylation using the cytochrome P-450 system, and this conversion is the basis of the MEGX test of liver function (Bargetzi et al, 1989). Thus, both MEGX and galactose elimination capacity (GEC) require uptake and metabolism by the liver to quantify hepatic function, unlike ICG clearance, which relies on uptake alone. Serum MEGX levels are measured at regular intervals after infusion of 2% lidocaine hydrochloride at 1 mg/kg over 1 to 2 minutes. Typical values at 15 minutes after infusion are between 60 and 96 ng/mL. Oellerich and colleagues (2001) noted that in patients with chronic liver disease awaiting transplantation, only those with a MEGX value less than 10 ng/mL died; Lee and colleagues (2005) used a cutoff level of 30 ng/mL as a predictor of prolonged hospital stay following hepatic resection.

Recently, preoperative MEGX levels at 30 minutes after administration of lidocaine also predicted postoperative liver failure and mortality in noncirrhotic patients following liver resection (Lorf et al, 2008). Ravaioli and colleagues (2003) found that MEGX levels below 25 ng/mL were associated with a higher level of liver dysfunction and postoperative complications in cirrhotic patients following hepatectomy.

Galactose Elimination Capacity

The galactose elimination capacity (GEC) is another dynamic liver function test that assesses hepatic metabolism in addition to uptake and elimination. Galactose is not protein bound and has up to 95% hepatic extraction. In this test, 50% galactose (0.5 g/kg) is administered intravenously, and its elimination is measured via serum at 20 to 50 minutes after injection. Urine and breath samples have also been utilized to assess GEC. A normal level is greater than 6 mg/kg/min. A comparison of GEC, MEGX, and ICG tests by Zoedler and colleagues (1995) revealed that GEC and ICG were best at predicting postoperative liver failure following hepatic resection. Redaelli and colleagues (2002) demonstrated that GEC was predictive of postoperative complications and liver failure in 258 patients undergoing hepatectomy, using a cutoff of less than 6 mg/kg/min to predict poorer outcome. GEC has also been demonstrated to correlate to severity of cirrhosis and predict complications in these patients (Merkel et al, 1992), but a paucity of data exists regarding GEC in liver transplantation. Nadalin and colleagues (2004) combined GEC with MRI volumetry in live-donor transplantation and found that GEC decreased 25% by day 30 and then increased to 125% by day 180.

Measurement of Predicted Postoperative Liver Volume

Volumetric Assessment of the Liver

Computed Tomography

CT volumetry is the most common means of predicting the volume of functional liver remnant following a proposed resection. It typically involves the ratio of resected volume to total liver volume (see Chapter 16). More sophisticated analyses include a three-dimensional reconstruction of images, often combined with CT angiography, to allow for better visualization of a tumor in relation to vascular and biliary structures, which can alter surgical plans related to resection margin and devascularized areas of the liver that would need to be incorporated into the resection (Yamanaka et al, 2007). Such images can help to better predict surgical resection planes and can allow for “virtual” simulations of the resections.

Shoup and colleagues (2003) found that in noncirrhotic patients who had less than 25% liver volume following resection, 90% of patients developed hepatic insufficiency, compared with 0% of patients who had more than 25% residual volume, using a volumetric analysis of CT scans. Schindl and colleagues (2005) confirmed these findings, reporting a cutoff of 26.6% residual volume, below which a high risk of hepatic dysfunction was found. Shirabe and colleagues (1999) reported that hepatic failure after resection in patients with cirrhosis was associated with a postoperative liver volume of less than 250 mL/m2. Other authors have combined volumetric analysis with ICG-15 scores and Child-Pugh scores to guide indications for resection (Kubota et al, 1997; Yigitler et al, 2003; Hsieh et al, 2006). The benefits of volumetric analysis are seen in the setting of preoperative PVE, which is typically used before extended resections that push patients to the limit in terms of size of residual liver remnant; PVE is discussed in detail in Chapters 65, 93A, and 93B. A number of investigators have noted that a remnant liver volume of 25% or less is associated with increased risk of postoperative hepatic failure and that PVE can provide an additional 10% to 15% parenchymal mass that can make resection safer (Vauthey et al, 2000; Hemming et al, 2003).

Magnetic resonance imaging (MRI) has also been used to evaluate patients before and after resection to predict outcome. Nadalin and colleagues (2004) combined MRI with GEC and found that patients had a 61% volume loss following right hepatectomy in the setting of a live donor transplant, and that these patients recovered function within 10 days, yet this only represented 74% of initial liver size. The GEC tests suggested that actual recovery of hepatic function was much slower than volume recovery.

The main drawback of volumetric imaging is that it provides no information with regard to hepatocyte parenchymal function, other than response to PVE, because the calculated volume of liver mass does not necessarily correlate with liver function. This is exacerbated in situations of liver parenchymal dysfunction, such as toxic injury following chemotherapy, fibrosis, or cirrhosis, or simply older patient age (Wakabayashi et al, 2002). In patients with cirrhosis, the predicted remnant-liver cutoff for safe resection has been established by a number of investigators to be 40%, rather than the 25% used in patients without cirrhosis; the use of PVE in patients with cirrhosis also appears to be helpful (Azoulay et al, 2000; Hemming et al, 2003).
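The volumetric cutoffs discussed in this section reduce to simple arithmetic, sketched here in Python. The calculation assumes that the remnant is total liver volume minus the planned resection volume, and it uses the 25% (noncirrhotic) and 40% (cirrhotic) thresholds quoted in the text; the function names are hypothetical, and, as the paragraph above stresses, volume alone says nothing about parenchymal function.

```python
def remnant_percent(total_liver_ml, resected_ml):
    """Predicted remnant volume as a percentage of total liver volume."""
    if total_liver_ml <= 0 or not 0 <= resected_ml <= total_liver_ml:
        raise ValueError("volumes must satisfy 0 <= resected <= total, total > 0")
    return 100.0 * (total_liver_ml - resected_ml) / total_liver_ml


def exceeds_volume_cutoff(remnant_pct, cirrhotic):
    """True if the remnant exceeds the cutoff quoted in the text:
    25% without cirrhosis, 40% with cirrhosis."""
    cutoff = 40.0 if cirrhotic else 25.0
    return remnant_pct > cutoff
```

For instance, removing 1200 mL from a 1500 mL liver leaves a 20% remnant, which falls below both cutoffs, whereas a 30% remnant clears the noncirrhotic cutoff but not the cirrhotic one.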

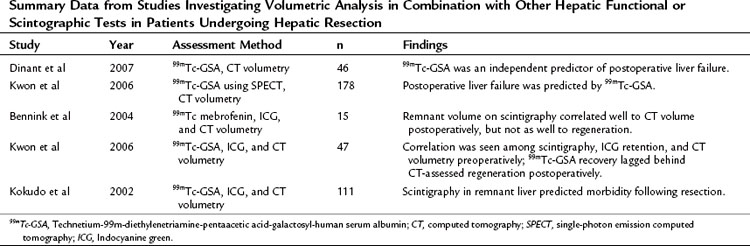

Ultimately, a combination of imaging volume assessment and grading of parenchymal function would represent the ideal test; however, results have been mixed (Table 2.4). Unfortunately, many of these multiassessment trials merely compared one test with another, rather than analyzing efficacy of the combination. The few trials that did specifically look at a combined approach found only moderate improvement over individual assessments in predicting outcomes after hepatectomy (Tu et al, 2007). It is apparent from these studies that, in patients analyzed by both volumetric and functional analyses, volume recovery precedes functional recovery after hepatectomy.

Other Measures of Liver Function

A number of other measures of liver function have been reported in the literature, with varying degrees of success. Serum levels of hyaluronate, hepatocyte growth factor, prealbumin, retinol-binding protein, and lecithin-cholesterol acyltransferase have all been described as simple markers of liver function (Mizuguchi et al, 2004; Katsuramaki et al, 2006). Many of these markers are used as nutritional indicators and as such are affected by overall nutritional status. However, following trends or following postoperative levels may be useful in determining how the liver reacts to resection. Sinusoidal endothelial cells take up hyaluronan and degrade it by a receptor-mediated mechanism with a short half-life of 2 to 5 minutes (Yachida et al, 2000). Thus serum levels of hyaluronate are a function of hepatic clearance, rather than mesenchymal cell production, and they have been shown to predict liver function in patients with cirrhosis. Serum hyaluronate levels can also predict hepatic regeneration following resection (Ogata et al, 1999; Mehta et al, 2008).

Elastography, the assessment of liver fibrosis using an intraoperative tactile sensor, has been proposed as a measure of liver function; however, this requires direct contact with the liver surface (Kusaka et al, 2000). Ultrasound-guided transabdominal elastography has been investigated as well to determine fibrosis or cirrhosis noninvasively, although minimal data are available in the prehepatectomy setting (Berzigotti et al, 2010; Laurent et al, 2008). Other methods of assessing functional liver reserve with varying degrees of success include preoperative measurement of portal vein pressures (Bruix et al, 1996), Doppler ultrasound measurement of hepatic flow (Annet et al, 2003; Lim et al, 2005), and bromosulfophthalein clearance (Yamanaka et al, 2001). The aminopyrine breath test (Merkel et al, 1992), antipyrine clearance (Sotaniemi et al, 1986), and caffeine clearance (Shrestha et al, 1997) are utilized infrequently, as they have largely been replaced by the less cumbersome and more predictive ICG clearance (Fan et al, 1995; Lau et al, 1997a; 1997b).

Conclusion

As surgeons have pushed the limits of extensive resections for metastatic and primary tumors of the liver and biliary tree, adequate assessment of liver reserve prior to resection, as well as the prediction of adequate functioning liver parenchyma following a major liver resection, has assumed great importance. Furthermore, with the increased utilization of laparoscopic approaches and a trend toward more limited anatomic resections, assessment of hepatic function must also be a dynamic and flexible one. Several major surgical organizations have established guidelines and consensus statements regarding indications and contraindications for surgical resection of liver tumors (Vauthey et al, 2006), and the main limitation of resection is no longer the size or number of tumors but rather the ability to leave adequate functioning liver behind, while preserving vascular and biliary support. This typically means leaving behind at least 20% of a normal liver or 40% of a diseased liver.

Recent liver-related mortality following hepatectomy in noncirrhotic patients has been reported to be as low as 2.8% in 1059 resections (Mullen et al, 2007), calling into question whether any routine preoperative functional liver assessment is necessary in patients without cirrhosis beyond standard clinical assessment, including preoperative labs and contrast-enhanced cross-sectional imaging. For patients with cirrhosis, some additional assessment of preoperative liver function would likely be useful in distinguishing good-risk from poor-risk Child-Pugh class A patients and in identifying a population of Child-Pugh class B patients who would potentially do well following resection. The Child-Pugh score can be supplemented by the addition of volumetric measurements, ICG retention, MEGX clearance, and perhaps 99mTc-GSA scintigraphy. However, no test has been demonstrated to reliably augment or improve upon the Child-Pugh score alone, in the hands of an experienced surgeon, in the selection of patients for hepatic surgery. The ideal preoperative liver assessment would include a combination of functional and volumetric analyses with clear anatomic imaging; until such a test is available, clinical assessment by an experienced hepatic surgeon using the Child-Pugh score remains the gold standard.

Albers I, et al. Superiority of the Child–Pugh classification to quantitative liver function tests for assessing prognosis of liver cirrhosis. Scand J Gastroenterol. 1989;24(3):269-276.

Annet L, et al. Hepatic flow parameters measured with MR imaging and Doppler US: correlations with degree of cirrhosis and portal hypertension. Radiology. 2003;229(2):409-414.

Azoulay D, et al. Percutaneous portal vein embolization increases the feasibility and safety of major liver resection for hepatocellular carcinoma in injured liver. Ann Surg. 2000;232(5):665-672.

Bargetzi MJ, et al. Lidocaine metabolism in human liver microsomes by cytochrome P450IIIA4. Clin Pharmacol Ther. 1989;46(5):521-527.

Bennett JJ, et al. Assessment of hepatic reserve prior to hepatic resection. J Hepatobiliary Pancreat Surg. 2005;12(1):10-15.

Bennink RJ, et al. Preoperative assessment of postoperative remnant liver function using hepatobiliary scintigraphy. J Nucl Med. 2004;45(6):965-971.

Berzigotti A, et al. Ultrasonographic evaluation of liver surface and transient elastography in clinically doubtful cirrhosis. J Hepatol. 2010;52(6):846-853.

Bruix J, et al. Surgical resection of hepatocellular carcinoma in cirrhotic patients: prognostic value of preoperative portal pressure. Gastroenterology. 1996;111(4):1018-1022.

Caesar J, et al. The use of indocyanine green in the measurement of hepatic blood flow and as a test of hepatic function. Clin Sci. 1961;21:43-57.

Cherrick GR, et al. Indocyanine green: observations on its physical properties, plasma decay, and hepatic extraction. J Clin Invest. 1960;39:592-600.

Child CG, et al. Surgery and portal hypertension. Major Probl Clin Surg. 1964;1:1-85.

Clements D, et al. Indocyanine green clearance in acute rejection after liver transplantation. Transplantation. 1988;46(3):383-385.

Conn HO. A peek at the Child–Turcotte classification. Hepatology. 1981;1(6):673-676.

Cucchetti A, et al. Recovery from liver failure after hepatectomy for hepatocellular carcinoma in cirrhosis: meaning of the model for end-stage liver disease. J Am Coll Surg. 2006;203(5):670-676.

Cucchetti A, et al. Impact of model for end-stage liver disease (MELD) score on prognosis after hepatectomy for hepatocellular carcinoma on cirrhosis. Liver Transpl. 2006;12(6):966-971.

Dinant S, et al. IL-10 attenuates hepatic I/R injury and promotes hepatocyte proliferation. J Surg Res. 2007;141(2):176-182.

Doci R, et al. Prognostic factors for survival and disease-free survival in hepatic metastases from colorectal cancer treated by resection. Tumori. 1995;81(3 Suppl):143-146.

Erdogan S, et al. Tc-99m MDP uptake in liver metastases from adenocarcinoma of the duodenum. Clin Nucl Med. 2004;29(2):125.

Fan ST, et al. Hospital mortality of major hepatectomy for hepatocellular carcinoma associated with cirrhosis. Arch Surg. 1995;130(2):198-203.

Faybik P, et al. Plasma disappearance rate of indocyanine green in liver dysfunction. Transplant Proc. 2006;38(3):801-802.

Franco D, et al. Resection of hepatocellular carcinomas: results in 72 European patients with cirrhosis. Gastroenterology. 1990;98(3):733-738.

Freeman RBJr, et al. The new liver allocation system: moving toward evidence-based transplantation policy. Liver Transpl. 2002;8(9):851-858.

Fujioka H, et al. Utility of technetium-99m-labeled-galactosyl human serum albumin scintigraphy for estimating the hepatic functional reserve. J Clin Gastroenterol. 1999;28(4):329-333.

Ha-Kawa SK, et al. Compartmental analysis of asialoglycoprotein receptor scintigraphy for quantitative measurement of liver function: a multicentre study. Eur J Nucl Med. 1997;24(2):130-137.

Hemming AW, et al. Preoperative portal vein embolization for extended hepatectomy. Ann Surg. 2003;237(5):686-691. discussion 691-693

Hirai I, et al. Evaluation of preoperative portal embolization for safe hepatectomy, with special reference to assessment of nonembolized lobe function with 99mTc-GSA SPECT scintigraphy. Surgery. 2003;133(5):495-506.

Hori T, et al. K(ICG) value, a reliable real-time estimator of graft function, accurately predicts outcomes in adult living-donor liver transplantation. Liver Transpl. 2006;12(4):605-613.

Hsieh CB, et al. Prediction of the risk of hepatic failure in patients with portal vein invasion hepatoma after hepatic resection. Eur J Surg Oncol. 2006;32(1):72-76.

Hwang EH, et al. Preoperative assessment of residual hepatic functional reserve using 99mTc DTPA-galactosyl-human serum albumin dynamic SPECT. J Nucl Med. 1999;40(10):1644-1651.

Igea J, et al. Indocyanine green clearance as a marker of graft function in liver transplantation. Transplant Proc. 1999;31(6):2447-2448.

Imamura H, et al. One thousand fifty-six hepatectomies without mortality in 8 years. Arch Surg. 2003;138(11):1198-1206; discussion 1206.

Jalan R, et al. A pilot study of indocyanine green clearance as an early predictor of graft function. Transplantation. 1994;58(2):196-200.

Jarnagin WR, et al. Improvement in perioperative outcome after hepatic resection: analysis of 1,803 consecutive cases over the past decade. Ann Surg. 2002;236(4):397-406; discussion 406-407.

Jochum C, et al. Quantitative liver function tests in donors and recipients of living donor liver transplantation. Liver Transpl. 2006;12(4):544-549.

Kamath PS, et al. A model to predict survival in patients with end-stage liver disease. Hepatology. 2001;33(2):464-470.

Katsuramaki T, et al. Assessment of nutritional status and prediction of postoperative liver function from serum apolipoprotein A-1 levels with hepatectomy. World J Surg. 2006;30(10):1886-1891.

Kita Y, et al. Liver allograft functional reserve estimated by total asialoglycoprotein receptor amount using Tc-GSA liver scintigraphy. Transplant Proc. 1998;30(7):3277-3278.

Kokudo N, et al. Predictors of successful hepatic resection: prognostic usefulness of hepatic asialoglycoprotein receptor analysis. World J Surg. 2002;26(11):1342-1347.

Kokudo N, et al. Clinical application of TcGSA. Nucl Med Biol. 2003;30(8):845-849.

Kubota K, et al. Measurement of liver volume and hepatic functional reserve as a guide to decision making in resectional surgery for hepatic tumors. Hepatology. 1997;26(5):1176-1181.

Kudo M, et al. In vivo estimates of hepatic binding protein concentration: correlation with classical indicators of hepatic functional reserve. Am J Gastroenterol. 1990;85(9):1142-1148.

Kusaka K, et al. Objective evaluation of liver consistency to estimate hepatic fibrosis and functional reserve for hepatectomy. J Am Coll Surg. 2000;191(1):47-53.

Kwon AH, et al. Use of technetium 99m diethylenetriamine-pentaacetic acid-galactosyl-human serum albumin liver scintigraphy in the evaluation of preoperative and postoperative hepatic functional reserve for hepatectomy. Surgery. 1995;117(4):429-434.

Kwon AH, et al. Preoperative determination of the surgical procedure for hepatectomy using technetium-99m-galactosyl human serum albumin (99mTc-GSA) liver scintigraphy. Hepatology. 1997;25(2):426-429.

Kwon AH, et al. Functional hepatic volume measured by technetium-99m-galactosyl-human serum albumin liver scintigraphy: comparison between hepatocyte volume and liver volume by computed tomography. Am J Gastroenterol. 2001;96(2):541-546.

Kwon AH, et al. Preoperative regional maximal removal rate of technetium-99m-galactosyl human serum albumin (GSA-Rmax) is useful for judging the safety of hepatic resection. Surgery. 2006;140(3):379-386.

Lam CM, et al. Major hepatectomy for hepatocellular carcinoma in patients with an unsatisfactory indocyanine green clearance test. Br J Surg. 1999;86(8):1012-1017.

Lamesch P, et al. Assessment of liver function in the early postoperative period after liver transplantation with ICG, MEGX, and GAL tests. Transplant Proc. 1990;22(4):1539-1541.

Lau H, et al. Evaluation of preoperative hepatic function in patients with hepatocellular carcinoma undergoing hepatectomy. Br J Surg. 1997;84(9):1255-1259.

Lau W, et al. A logical approach to hepatocellular carcinoma presenting with jaundice. Ann Surg. 1997;225(3):281-285.

Laurent C, et al. Non-invasive evaluation of liver fibrosis using transient elastography. J Hepatol. 2008;48(5):835-847.

Lee CF, et al. Using indocyanine green test to avoid post-hepatectomy liver dysfunction. Chang Gung Med J. 2007;30(4):333-338.

Lee WC, et al. Assessment of hepatic reserve for indication of hepatic resection: how I do it. J Hepatobiliary Pancreat Surg. 2005;12(1):23-26.

Lim AKP, et al. Can Doppler sonography grade the severity of hepatitis C–related liver disease? Am J Roentgenol. 2005;184(6):1848-1853.

Lorf T, et al. Prognostic value of the monoethylglycinexylidide (MEGX) test prior to liver resection. Hepatogastroenterology. 2008;55(82-83):539-543.

Mehta P, et al. Diagnostic accuracy of serum hyaluronic acid, FIBROSpect II, and YKL-40 for discriminating fibrosis stages in chronic hepatitis C. Am J Gastroenterol. 2008;103(4):928-936.

Merkel C, et al. Indocyanine green intrinsic hepatic clearance as a prognostic index of survival in patients with cirrhosis. J Hepatol. 1989;9(1):16-22.

Merkel C, et al. Aminopyrine breath test in the prognostic evaluation of patients with cirrhosis. Gut. 1992;33(6):836-842.

Mizuguchi T, et al. Serum hyaluronate level for predicting subclinical liver dysfunction after hepatectomy. World J Surg. 2004;28(10):971-976.

Mullen JT, et al. Hepatic insufficiency and mortality in 1,059 noncirrhotic patients undergoing major hepatectomy. J Am Coll Surg. 2007;204(5):854-862.

Nadalin S, et al. Volumetric and functional recovery of the liver after right hepatectomy for living donation. Liver Transpl. 2004;10(8):1024-1029.

Nagao T, et al. Hepatic resection for hepatocellular carcinoma: clinical features and long-term prognosis. Ann Surg. 1987;205(1):33-40.

Nagasue N, et al. Liver resection for hepatocellular carcinoma. Results of 229 consecutive patients during 11 years. Ann Surg. 1993;217(4):375-384.

Nanashima A, et al. Relationship between CT volumetry and functional liver volume using technetium-99m galactosyl serum albumin scintigraphy in patients undergoing preoperative portal vein embolization before major hepatectomy: a preliminary study. Dig Dis Sci. 2006;51(7):1190-1195.

Noguchi T, et al. Preoperative estimation of surgical risk of hepatectomy in cirrhotic patients. Hepatogastroenterology. 1990;37(2):165-171.

Nonami T, et al. Hepatic resection for hepatocellular carcinoma. Am J Surg. 1997;173(4):288-291.

Nordlinger B, et al. Surgical resection of colorectal carcinoma metastases to the liver: a prognostic scoring system to improve case selection based on 1,568 patients. Association Française de Chirurgie. Cancer. 1996;77(7):1254-1262.

Oellerich M, et al. Assessment of pretransplant prognosis in patients with cirrhosis. Transplantation. 1991;51(4):801-806.

Oellerich M, et al. The MEGX test: a tool for the real-time assessment of hepatic function. Ther Drug Monit. 2001;23(2):81-92.

Ogata T, et al. Serum hyaluronan as a predictor of hepatic regeneration after hepatectomy in humans. Eur J Clin Invest. 1999;29(9):780-785.

Ohwada S, et al. Perioperative real-time monitoring of indocyanine green clearance by pulse spectrophotometry predicts remnant liver functional reserve in resection of hepatocellular carcinoma. Br J Surg. 2006;93(3):339-346.

Okochi O, et al. ICG pulse spectrophotometry for perioperative liver function in hepatectomy. J Surg Res. 2002;103(1):109-113.

Plevris JN, et al. Indocyanine green clearance reflects reperfusion injury following liver transplantation and is an early predictor of graft function. J Hepatol. 1999;30(1):142-148.

Poon RT, et al. Improving perioperative outcome expands the role of hepatectomy in management of benign and malignant hepatobiliary diseases: analysis of 1,222 consecutive patients from a prospective database. Ann Surg. 2004;240(4):698-708; discussion 708-710.

Pugh RN, et al. Transection of the oesophagus for bleeding oesophageal varices. Br J Surg. 1973;60(8):646-649.

Ravaioli M, et al. Operative risk by the lidocaine test (MEGX) in resected patients for HCC on cirrhosis. Hepatogastroenterology. 2003;50(53):1552-1555.

Redaelli CA, et al. Preoperative galactose elimination capacity predicts complications and survival after hepatic resection. Ann Surg. 2002;235(1):77-85.

Sakahara H, et al. Asialoglycoprotein receptor scintigraphy in evaluation of auxiliary partial orthotopic liver transplantation. J Nucl Med. 1999;40(9):1463-1467.

Satoh K, et al. 99m-Tc-GSA liver dynamic SPECT for the preoperative assessment of hepatectomy. Ann Nucl Med. 2003;17(1):61-67.

Sawada T, et al. Hepatectomy for metastatic liver tumor in patients with liver dysfunction. Hepatogastroenterology. 2007;54(80):2306-2309.

Schindl MJ, et al. The value of residual liver volume as a predictor of hepatic dysfunction and infection after major liver resection. Gut. 2005;54(2):289-296.

Shimada K, et al. Analysis of prognostic factors affecting survival after initial recurrence and treatment efficacy for recurrence in patients undergoing potentially curative hepatectomy for hepatocellular carcinoma. Ann Surg Oncol. 2007;14(8):2337-2347.

Shirabe K, et al. Postoperative liver failure after major hepatic resection for hepatocellular carcinoma in the modern era with special reference to remnant liver volume. J Am Coll Surg. 1999;188(3):304-309.

Shoup M, et al. Volumetric analysis predicts hepatic dysfunction in patients undergoing major liver resection. J Gastrointest Surg. 2003;7(3):325-330.

Shrestha R, et al. Quantitative liver function tests define the functional severity of liver disease in early-stage cirrhosis. Liver Transpl Surg. 1997;3(2):166-173.

Sotaniemi EA, et al. Fibrotic process and drug metabolism in alcoholic liver disease. Clin Pharmacol Ther. 1986;40(1):46-55.

Sugimoto H, et al. Early detection of liver failure after hepatectomy by indocyanine green elimination rate measured by pulse-dye densitometry. J Hepatobiliary Pancreat Surg. 2006;13(6):543-548.

Tanaka A, et al. Perioperative changes in hepatic function as assessed by asialoglycoprotein receptor indices by technetium 99m galactosyl human serum albumin. Hepatogastroenterology. 1999;46(25):369-375.

Teh SH, et al. Hepatic resection of hepatocellular carcinoma in patients with cirrhosis: Model of End-Stage Liver Disease (MELD) score predicts perioperative mortality. J Gastrointest Surg. 2005;9(9):1207-1215; discussion 1215.

Tsubono T, et al. Indocyanine green elimination test in orthotopic liver recipients. Hepatology. 1996;24(5):1165-1171.

Tu R, et al. Assessment of hepatic functional reserve by cirrhosis grading and liver volume measurement using CT. World J Gastroenterol. 2007;13(29):3956-3961.

Vauthey J-N, et al. Standardized measurement of the future liver remnant prior to extended liver resection: methodology and clinical associations. Surgery. 2000;127(5):512-519.

Vauthey J-N, et al. AHPBA/SSO/SSAT consensus conference on hepatic colorectal metastases: rationale and overview of the conference. Ann Surg Oncol. 2006;13(10):1259-1260.

Wakabayashi H, et al. Effect of preoperative portal vein embolization on major hepatectomy for advanced-stage hepatocellular carcinomas in injured livers: a preliminary report. Surg Today. 1997;27(5):403-410.

Wakabayashi H, et al. Evaluation of the effect of age on functioning hepatocyte mass and liver blood flow using liver scintigraphy in preoperative estimations for surgical patients: comparison with CT volumetry. J Surg Res. 2002;106(2):246-253.

Wiesner RH, et al. MELD and PELD: application of survival models to liver allocation. Liver Transpl. 2001;7(7):567-580.

Yachida S, et al. Measurement of serum hyaluronate as a predictor of human liver failure after major hepatectomy. World J Surg. 2000;24(3):359-364.

Yamanaka J, et al. Impact of preoperative planning using virtual segmental volumetry on liver resection for hepatocellular carcinoma. World J Surg. 2007;31(6):1249-1255.

Yamanaka N, et al. Usefulness of monitoring the ICG retention rate as an early indicator of allograft function in liver transplantation. Transplant Proc. 1992;24(4):1614-1617.

Yamanaka N, et al. Hepatectomy and marked retention of indocyanine green and bromosulfophthalein. Hepatogastroenterology. 2001;48(41):1450-1452.

Yigitler C, et al. The small remnant liver after major liver resection: how common and how relevant? Liver Transpl. 2003;9(9):S18-S25.

Yumoto Y, et al. Estimation of remnant liver function before hepatectomy by means of technetium-99m-diethylenetriamine-pentaacetic acid galactosyl human albumin. Cancer Chemother Pharmacol. 1994;33(Suppl):S1-S6.

Zoedler T, et al. Evaluation of liver function tests to predict operative risk in liver surgery. HPB Surg. 1995;9(1):13-18.