Chapter 1 Seizure Prediction: Its Evolution and Therapeutic Potential

Introduction

Seizure prediction has a long history, starting in the 1970s1 with very small data sets looking only at preseizure (preictal) events minutes to seconds before seizures. Over almost 40 years it has progressed to current methods, which use mathematical methods to analyze continuous days of multiscale intracranial electroencephalogram (IEEG) recordings.2 Most importantly, seizure prediction research has given hope for new warning and therapeutic devices to the 25% of epilepsy patients who cannot be successfully treated with drugs or surgery.3 One of the most insidious aspects of seizures is their unpredictability. In this light, in the absence of completely controlling a patient’s epilepsy, seizure prediction is an important aim of clinical management and treatment. From a broader view, seizure prediction research has also transformed the way we understand epilepsy and the basic mechanisms underlying seizure generation. Seizures were once viewed as isolated and abrupt events, but we now view them as processes that develop over time and space in epileptic networks. Thus, what started as a goal of predicting seizures for clinical applications has expanded into a field dedicated to understanding seizure generation.

The study of seizure generation necessarily encompasses a large collaborative effort between mathematicians, engineers, physicists, clinicians, and neuroscientists. However, it also requires large volumes of clinical data, which has led to more specific collaborations between epilepsy centers. These partnerships have come about through The International Seizure Prediction Group (ISPG), which held its Third Collaborative Workshop on Seizure Prediction in Freiburg, Germany, in October 2007. This workshop, and its two predecessors, allowed various groups to share computational methods, data, and ideas, and to focus on basic research and its translation to clinical relevance.

There is a large gulf between understanding how seizures are generated and the eventual goal of preventing seizure occurrence. Although studies in the literature have focused on prospectively testing seizure prediction methods,4,5 no study to date has confirmed the ability of a method to predict seizures better than random, or with accuracy sufficient for prospective clinical trials or eventual implementation in patients. Much of the blame for this performance failure resides in two important challenges, both of which have recently been addressed. First, until recently, there was no consensus on the amount and quality of data required to conduct appropriate prospective prediction studies. Second, the statistical methods for designing experiments, and the metrics by which to judge successful algorithm performance, were not in place.2 Recent research has made substantial progress in these areas.6–8 Looking further ahead, for successful prediction devices to emerge, many technical questions will need to be resolved to design systems that not only warn the patient of a seizure but also intervene to preempt it. For example, the intervention strategy (drug versus stimulation or other method), the clinical interface (sensors, classifiers, etc.), and the number and site of electrode placement are just a few of the problems under investigation that will need definitive solutions.

Historical Background

Epileptologists have long been aware that many patients with epilepsy know that their seizures are not abrupt in onset, and that they can often predict periods of time when seizures are more likely to occur. Many clinical findings support the idea that seizures are predictable. An increase in blood flow in the epileptic temporal lobe has been seen as much as 12 minutes before the onset of seizures.9,10 Clinical “prodromes” are noted in more than 50% of patients, according to Rajna et al.,11 with more refined measurements made recently by Haut et al.12,13 An increase in oxygen availability and blood oxygen level dependent signals on magnetic resonance imaging (MRI) have also been noted before seizures.14,15 Preictal changes in heart rate have been reported in several studies.16–18 However, these clinical findings do not reveal how long before a seizure the first changes in seizure generation occur or by what method a seizure might be predicted.

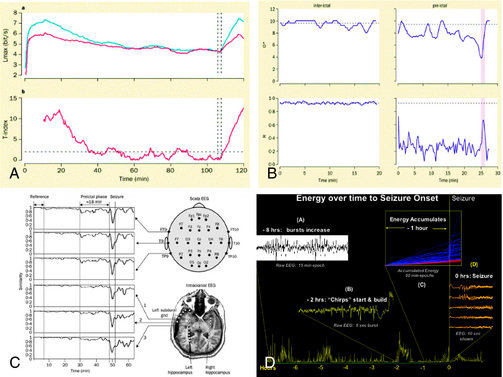

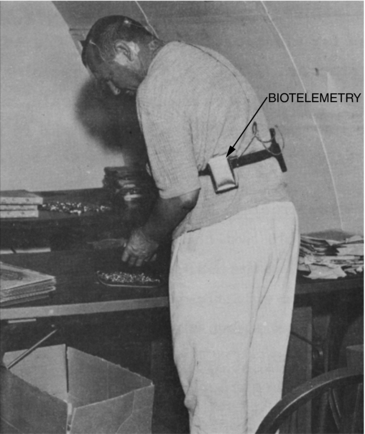

Seizure prediction research has evolved via diverse mathematical and engineering approaches, from its starting point in the 1970s. Viglione and Walsh not only implemented the first electronic classifier to solve this problem, in the form of an analog neural network, but they also created an actual device and tested it on patients with epilepsy19 (Figure 1-1). This device used scalp EEG electrodes to record signals for classification.1,20 Linear approaches looking at “absence” seizures using surface electrodes detected preictal changes up to 6 seconds before seizure onset.21 Another early study found differences between 1-minute preictal EEG epochs and control epochs.22 Using another approach, many studies have evaluated the rate of spikes in EEG before seizures, with some23 finding predictive differences, but with the majority finding no predictive value.24,25 Novel mathematical approaches started with nonlinear systems using the largest Lyapunov exponent. They detected decreases in chaotic behavior in the minutes before temporal lobe epileptic seizures,26–29 with subsequent studies claiming prediction over hours5,30 (Figure 1-2). These and other early studies are important because they support the idea that seizures are not isolated events but rather develop over time.

Figure 1–1 A patient using one of the earliest seizure warning devices invented by Viglione et al.19 The biotelemetry unit was developed by Biocom, Inc., Culver City, CA.

However, there were methodological problems with these early studies. First, they focused on the preictal period and did not include prolonged interictal recordings; thus there was doubt about the specificity of the findings with regard to time. Second, they also lacked specificity with regard to space because they used univariate measures and one-channel recordings. Third, most of these studies employed small numbers of patients and small selected data sets. Finally, and perhaps most important, these studies did not have specific, well-thought-out statistical criteria for success and did not demonstrate performance measured against a chance predictor. These criteria would later reveal hidden biases in experimental data sets, not apparent in initial studies. The identification of these difficulties was one of the main products of the First International Collaborative Workshop on Seizure Prediction.2
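The comparison against a chance predictor described above can be made concrete with a short calculation. The following is a minimal sketch and is not taken from any of the studies cited here. It assumes a random predictor that raises alarms as a Poisson process at the same false prediction rate as the algorithm under test, and it assumes seizures can be treated as independent trials. The function names, the parameters `fpr_per_hour` and `sop_hours`, and the example numbers are all hypothetical.

```python
import math


def chance_sensitivity(fpr_per_hour: float, sop_hours: float) -> float:
    """Probability that a Poisson random predictor raises at least one alarm
    inside a warning window of length sop_hours preceding a seizure."""
    return 1.0 - math.exp(-fpr_per_hour * sop_hours)


def p_value_vs_chance(n_predicted: int, n_seizures: int, p_chance: float) -> float:
    """Binomial tail: probability that the random predictor catches at least
    n_predicted of n_seizures seizures (seizures assumed independent)."""
    return sum(
        math.comb(n_seizures, k) * p_chance**k * (1.0 - p_chance) ** (n_seizures - k)
        for k in range(n_predicted, n_seizures + 1)
    )


# Hypothetical example: 0.15 false alarms per hour, a 30-minute warning
# window, and an algorithm that anticipated 6 of 10 seizures.
p_chance = chance_sensitivity(fpr_per_hour=0.15, sop_hours=0.5)
p_value = p_value_vs_chance(n_predicted=6, n_seizures=10, p_chance=p_chance)
print(f"chance sensitivity = {p_chance:.3f}, p-value = {p_value:.2e}")
```

A small p-value here would suggest only that the hypothetical algorithm outperforms this particular random model. It says nothing about biases in how the data were selected, which is the separate problem raised in the paragraph above.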

As the field evolved, progressive attempts were made to conquer these challenges. The first problem was addressed in multiple studies in which a number of mathematical measures, starting with the correlation dimension,31 were shown to distinguish preictal and interictal periods using expanded data sets. The second problem was addressed with bivariate and multivariate measures, such as the largest Lyapunov exponent of two channels,32–34 and other methods fusing information from multiple channels and measures over time,4,35,36 simulated neuronal cell bodies,37 and measures for phase synchronization and cross correlation.36,38,39

But none of these studies addressed the lack of large data sets, containing prolonged interictal periods and sufficient numbers of seizures for classifier training and testing. As a result, their findings came into question in later studies carried out on unselected and more extended EEG recordings. Though the correlation dimension31 reliably demonstrated preseizure changes, these changes were also seen at other times, when seizures did not occur, a problem of many early (and present) methods. For this reason, although many methods were considered promising, they were found not to predict seizures, as verified by other investigators using alternative statistical methods.40,41 Statistical concerns also engendered criticism of studies involving prediction work invoking Lyapunov exponents.42 The predictive value of accumulated energy measures35 was also called into question by Harrison et al. and Maiwald et al.43,44 though these groups did not separate recordings into wakefulness and sleep, as was required in the original study. Therefore, the suitability of nonlinear mathematics,45 as well as linear methods in seizure prediction, was called into question.

These questions spawned The First International Collaborative Workshop on Seizure Prediction, held in Bonn, Germany, in 2002. The goals of the workshop were to give a tutorial on state-of-the-art methods for predicting seizures to students and investigators, to share scientific ideas, to compare different mathematical methods on a large common data set (five continuous intracranial EEG recordings from patients undergoing presurgical evaluation for medically intractable epilepsy), and to set goals and standards for future work in the field. The meeting attendees published a collection of papers summarizing this meeting, including a summary paper outlining a consensus on future research in seizure prediction, under the name of “The International Seizure Prediction Group.”2 Suggestions for future research studies in the field included: (1) that the first thorough studies to be performed, to guarantee sampling data from networks involved in generating seizures, should examine recordings from very focal disorders, such as unilateral temporal lobe epilepsy; (2) that seizure prediction should focus on EEG events rather than events having only overt clinical symptoms; (3) that a top priority for the field was to establish an international database of high-quality intracranial EEG recordings for collaborative research; (4) that it was key that prolonged recordings, with statistically sufficient amounts of interictal and ictal data, be analyzed; and (5) that there needed to be better development of statistical models for seizure prediction and a consensus on what constitutes success.2 These last two issues were seen as major impediments to success in the area of seizure prediction over the years. Unfortunately, there was, at that time, no consensus on what statistical performance measures should be used to present results. There was also much debate about the predictability of seizures from patients with types of epilepsy other than that originating from the temporal lobe.

Results from the individual group presentations at the meeting were of interest. The conclusions of studies on this large test data set showed poor performance for univariate measures46,47 and better performance for bi- and multivariate measures.30,48,49 Nonlinear measures were not found to outperform linear ones.49 Of interest, the use of machine learning for automated feature exploration was introduced at this meeting, though this method did not yield results significantly better than other multivariate measures.4

Presentations at the meeting also included results on spatial contributions to seizure generation in the brain. The finding of preictal changes in channels remote from the seizure onset zone4,48,49 necessarily changed the conceptual framework for understanding seizure generation. Whereas earlier studies taught that seizures are not abrupt in onset, but more likely develop over time, these new studies demonstrated that even focal seizures likely develop across a network of distributed regions that are functionally connected. These findings challenged the more commonly accepted view of the epileptic “focus.”

The conference ended on a humorous but poignant note summing up the results of different prediction methods presented. This was a quote from what would later be a paper by the Bonn group: “The null hypothesis of the non-existence of a pre-seizure state cannot be disproved.”49,50 Papers detailing the research and presentations of each group at this workshop were later published in a special edition of the journal Clinical Neurophysiology (vol. 116, no. 3, 2005).

Gathering Momentum

There was a 4-year hiatus between the first Seizure Prediction Workshop and the second, which took place in Bethesda, Maryland, in February 2006 and was sponsored by the National Institute of Neurological Disorders and Stroke (NINDS). During this period, investigators in the field continued to critically examine their work and developed new insights into why prior methods had failed to produce reliable algorithms that could predict seizures better than random chance. This introspection was released in a flurry of talks and papers leading up to and after the meeting. This body of work focused primarily on statistical concerns about current methods for tracking seizure generation over time,6–8,34,41,49,51 but also on clinical reports from patients to bolster seizure prediction work, reinforcing the concept that seizures are generated over time.12,52 The second workshop provided an explosive outlet for these ideas, with harsh criticism of much of the early work in this field. Rather than interpreting this as a failure of the field of seizure prediction to make progress, most investigators came away from the meeting with a renewed sense of focus and a feeling that tremendous progress had been made. One of these leaps forward was the acceptance of seizure generation as likely being a “stochastic process.” In this scheme there are periods of increased probability of seizure onset, which may or may not lead to seizures, depending on unknown internal and external factors. This focused investigators interested in seizure generation on forecasting probabilities, rather than specific events.

During the time leading up to the Third Collaborative Workshop on Seizure Prediction, held in Freiburg, Germany, at the end of September 2007, it became clear that the priority items for progress in the field, identified at the first workshop in 2002, had been aggressively pursued. There was now a consensus on statistical methods required to prove success at seizure prediction.6,7 Standards for data to be used in prediction experiments were also put forward, and a model of seizure generation as a stochastic process in distributed neuronal networks was growing in popularity. At the same time, there was increasing interest in the role of neurophysiologic markers for epileptic brain and in the spatial and temporal evolution leading up to seizures. The idea behind this work is that there are particular neurophysiological markers of an epileptic brain that appear interictally and that may evolve in their temporal and spatial distribution as the probability of seizure increases.35 In particular, attention began to focus on high-frequency oscillations (HFOs) during this period.

High-Frequency Oscillations, Broad-Band EEG, and Seizure Generation

The idea of what constitutes a pathological HFO in human electrophysiology is still evolving. In clinical EEG studies from patients implanted with intracranial macroelectrodes, the frequency spectrum of these oscillations is limited by signal filtering on clinical systems. This is due to hard-wired antialiasing filters set at 100 Hz in many systems, despite sample rates that can go much higher than 250 Hz.53,54 Other studies using custom recording systems with macro- and microelectrodes in humans and animal models of epilepsy have observed much higher frequency oscillations in the 100 to 200 Hz range. Investigators have called these oscillations “ripples,” which are felt to be seen in both normal and abnormal processes. Presumably pathological oscillations, called “fast ripples (FR),” were also identified at ∼250 Hz or higher55–57 (Figure 1-3). Some investigators are actively involved in mapping oscillations in and below the ripple frequencies in normal cognitive processes, such as spatial navigation, memory storage, and recall.58–62 The potential role of ripples in network dysfunction in epilepsy is not yet well defined, and there appears to be considerable overlap in the distribution of these two populations of events (ripples and fast ripples), without a clear frequency cutoff to distinguish them.63 Various studies show an increase in HFO and broad-band fast activity preictally and at seizure onset.53,64–66 Very high frequency (VHF) oscillations or fast ripples, between 250 and 500 Hz, are postulated to be pathological because they are seen primarily in epileptogenic areas. They are proposed to represent hypersynchronous interconnected neurons capable of generating seizures.67 VHF oscillations appear to be present in patients with mesial temporal lobe epilepsy before the onset of seizures.68 In temporal lobe and neocortical epilepsy, HFO activity is postulated to play an important role in seizure generation.53,63,69,70 The use of HFO activity to predict seizures is an area of important future research.71 The use of automated methods for detecting HFO activity to localize epileptic networks is an active area of investigation.67,72,73 It is important to note that there is extensive literature exploring these oscillations in animal models of epilepsy, extending from the 1960s and earlier up to the present.74–78 A discussion of this rich literature is beyond the scope of this brief review. It is important, however, to note that interest in HFOs and epilepsy extends well beyond the temporal lobe to the neocortex and deeper structures, such as the thalamus. The role of the thalamus in generating these oscillations and their relationship to epilepsy is a particular area of intense interest.79,80 When pathological conditions occur, such as in absence epilepsy, these circuits can be co-opted to create hypersynchronous activity.81 However, the exact relationship of this research to spontaneous partial seizures, and our ability to predict these events, is still undetermined.
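The interaction between recording bandwidth and the frequency bands discussed above can be illustrated with a small calculation. This is a sketch only. The band edges are the ranges quoted in this section (100 to 200 Hz for ripples, 250 to 500 Hz for fast ripples), the antialiasing filter is idealized as a hard cutoff, and the function names and the two example recording systems are hypothetical. Because the text notes that the two populations overlap without a clear frequency cutoff, the labels below should be read as a convenience rather than a physiological classification.

```python
RIPPLE_BAND_HZ = (100.0, 200.0)       # "ripple" range quoted in the text
FAST_RIPPLE_BAND_HZ = (250.0, 500.0)  # "fast ripple" range quoted in the text


def usable_bandwidth_hz(sample_rate_hz: float, antialias_cutoff_hz: float) -> float:
    """Highest frequency that survives both sampling (Nyquist limit) and the
    hardware antialiasing filter, treated here as an ideal cutoff."""
    return min(sample_rate_hz / 2.0, antialias_cutoff_hz)


def band_is_recordable(band_hz, sample_rate_hz: float, antialias_cutoff_hz: float) -> bool:
    """True only if the whole band lies at or below the usable bandwidth."""
    return band_hz[1] <= usable_bandwidth_hz(sample_rate_hz, antialias_cutoff_hz)


def label_oscillation(freq_hz: float) -> str:
    """Label a dominant frequency using the nominal bands above."""
    if RIPPLE_BAND_HZ[0] <= freq_hz <= RIPPLE_BAND_HZ[1]:
        return "ripple"
    if FAST_RIPPLE_BAND_HZ[0] <= freq_hz <= FAST_RIPPLE_BAND_HZ[1]:
        return "fast ripple"
    return "unclassified"


# Hypothetical clinical system: 500 Hz sampling behind a 100 Hz filter.
print(band_is_recordable(RIPPLE_BAND_HZ, 500.0, 100.0))
# Hypothetical research system: 2 kHz sampling behind an 800 Hz filter.
print(band_is_recordable(FAST_RIPPLE_BAND_HZ, 2000.0, 800.0))
print(label_oscillation(220.0))
```

In the first case the 100 Hz filter, not the sample rate, is the limiting factor, which is the point made above about clinical systems.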

Therapeutics, Devices, and Seizure Prediction

One of the strongest motivations for seizure prediction research is its potential for driving a therapeutic device. Focal brain stimulation for the treatment of epilepsy has been explored for over 20 years.82–84 Early trials were often uncontrolled and thus inconclusive, and it was only later, when the Food and Drug Administration (FDA) instituted stricter standards and regulation of medical devices, that device safety, evaluation, and testing improved considerably.85

The Vagus Nerve Stimulator (VNS, Cyberonics, Inc.), approved by the FDA in 1997 for pharmaco-resistant partial epilepsy, is the first antiepileptic device to be approved by this body and be widely used. Its operation is an example of a chronic stimulation open-loop protocol. The device functions by periodically stimulating the vagus nerve with no direct feedback to modulate operation. In early trials, 30 to 40% of patients with medically intractable seizures experienced seizure reduction of approximately 50% with this therapy, though less than one patient in ten became seizure-free.86–88

Driven by proof of principle that devices such as the VNS can reduce or stop seizures, and by the great success of analogous devices in cardiology, there is currently an explosion of research and development for neuro-implantables to treat epilepsy. The SANTE trial (Medtronic, Inc.) employs the same Deep Brain Stimulator (DBS) used for treating Parkinson’s disease (Figure 1-4). It activates in an open-loop protocol to deliver intermittent electrical stimulation to the anterior nucleus of the thalamus (ANT) for treating partial onset epilepsy.89,90 Small trials have shown improvement in seizure severity.91,92 A multicenter pivotal clinical trial is currently underway to evaluate the device’s efficacy. Early attempts to make this therapy responsive, or “closed loop,” have been inconclusive.93 Continuous hippocampal electrical stimulation for mesial temporal lobe epilepsy has also been attempted, with a reported reduction in seizure frequency of up to 50%, though this remains an active area of investigation.94–97

Over the past 5 years there has been increasing interest in developing true “closed-loop” devices for treating epilepsy. Although there is some interest in making existing technologies, such as the VNS and ANT stimulators, closed loop, more attention has been paid to technologies triggering direct cortical or hippocampal stimulation in response to electrocorticographic recordings from subdural strip and hippocampal depth electrodes. In such systems, a seizure detection algorithm is developed, validated, and implemented in a closed-loop stimulation protocol to prevent seizures.98 In these protocols, focal stimulation is only triggered when needed to abort a seizure. An intracranial Responsive Neurostimulator (RNS, NeuroPace, Inc., Mountain View, CA) is currently being investigated in a multicenter pivotal clinical trial for treatment of medically refractory partial onset seizures. This closed-loop protocol triggers electrical stimulation when the input algorithm detects that a seizure has started or is imminent on the intracranial EEG.98 There is some suggestion that triggering responsive stimulation earlier in the onset of seizure activity on the EEG may improve system efficacy (personal communication), though this has not been validated. An early study found a 45% decrease in seizures in seven out of eight patients,99 and a decrease was also seen in an initial safety study not designed to test efficacy.100 Newer strategies for responsive stimulation err on the side of early detection, with relatively low specificity, in the hope of stimulating and potentially arresting seizures earlier. This is done at the expense of significant numbers of false-positive stimulations. The approach is based on the premise that there is no evidence that pulse stimulations that do not cause after-discharges have adverse effects on the brain. This premise rests on extrapolations from the animal literature, as there are no human studies available to draw on.101 Some investigators propose that stimulation based on “seizure prediction,” or algorithms that identify periods of increased probability of seizure onset, may be an even more effective strategy. Such paradigms are an area of intense investigation.
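The trade-off described above, earlier detection at the cost of more false-positive stimulations, can be seen in a toy threshold detector. This sketch does not represent the algorithm used in any actual device. It assumes a single precomputed feature value per sample, a fixed threshold, and a hold-off period after each trigger. The function names, the `refractory` parameter, and the synthetic feature trace are all hypothetical.

```python
def triggers(feature, threshold, refractory=5):
    """Sample indices at which stimulation would be triggered. After each
    trigger the detector is held off for `refractory` samples."""
    fired = []
    next_allowed = 0
    for i, value in enumerate(feature):
        if i >= next_allowed and value >= threshold:
            fired.append(i)
            next_allowed = i + refractory
    return fired


def evaluate(feature, onset_index, threshold, refractory=5):
    """Return (latency, false_triggers). Latency is the number of samples
    from seizure onset to the first trigger at or after onset (None if the
    seizure is missed); false_triggers counts triggers before onset."""
    fired = triggers(feature, threshold, refractory)
    false_triggers = sum(1 for i in fired if i < onset_index)
    after_onset = [i for i in fired if i >= onset_index]
    latency = after_onset[0] - onset_index if after_onset else None
    return latency, false_triggers


# Synthetic trace: 15 interictal samples with two brief bumps, then a
# rising feature from seizure onset at index 15.
feature = [1, 1, 2, 1, 4, 1, 1, 1, 2, 1, 1, 4, 1, 1, 1, 2, 3, 5, 7, 9, 9, 9]
onset = 15

for threshold in (6, 3):
    latency, false_triggers = evaluate(feature, onset, threshold)
    print(f"threshold={threshold}: latency={latency}, false triggers={false_triggers}")
```

On this trace the lower threshold fires sooner after onset but also fires on both interictal bumps. Whether such extra stimulations are acceptable is exactly the open question raised above.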

It is important to note that there is considerable research and commercial activity in the area of other forms of responsive stimulation and therapy for refractory seizures. Among modalities being investigated are open-loop and responsive trigeminal nerve stimulation,102,103 focal cooling,104 electrical field stimulation,105,106 and a variety of other therapeutic modalities.

Seizure Prediction Devices and Their Therapeutic Potential

Seizure prediction and antiepileptic device research are on trajectories that are already intersecting. Now that there is agreement on statistical methodologies to properly assess prediction algorithms, it is likely that many previously discarded methods will be found to predict seizures better than chance. The question then becomes, what kind of performance is required for clinical utility? Such a discussion is complex and depends on the application. For example, a device solely dedicated to warning of impending seizures will need to perform with a high degree of accuracy. Too many false-positive alarms will cause the device to be ignored. Rare false-negative warnings have the potential to be more dangerous, if patients engage in risky behavior reinforced by overconfidence in less-than-perfect device performance. Still, given that the unpredictability of seizures is perhaps one of the most common complaints in epilepsy patients, there is enormous potential to improve quality of life in those affected through warning systems alone. This remains an area of intense research. Systems that do not inform patients, but trigger therapeutic intervention that is not apparent to their hosts, may be easier to implement. This is because these devices can theoretically tolerate less-accurate performance, with regard to false-positive rates, if there is no penalty for false detections. False-positive detections may trigger unneeded intervention, but this is acceptable if no short- or long-term side effects result. Again, the rationale for implementing seizure prediction in such devices is the hypothesis that earlier intervention in the process of seizure generation will be more effective in preventing clinical events than later interventions. Prediction devices, despite their increased complexity, also have theoretical advantages over open-loop systems, or those based on detection, in that they could potentially require less power, saving stimulation or other interventions for when they are needed, rather than continuously. Other complex issues, such as device cost, training, and maintenance, are beyond the scope of this discussion.

The earliest seizure warning device, crafted by Viglione in the 1970s, monitored standard scalp EEG and used an analog implementation of a neural network classifier for seizure prediction.1 Though rigorous statistical validation of device performance is not documented, the inventor was encouraged by his results. Research and now standard clinical practice demonstrate that intracranial EEG detects seizure onset 10 seconds or more in advance of scalp recordings, and that implanted electrodes are more stable and their recordings more artifact free than their scalp counterparts.107 For this reason, research in seizure prediction and antiepileptic devices has focused primarily on IEEG. IEEG electrodes are now frequently chronically implanted and used for sensing and stimulation in therapeutic neurological devices. Early results assessing long-term function and tissue compatibility are encouraging. These results suggest that early seizure prediction devices are likely to process IEEG and may potentially use the same electrodes for sensing signals and delivering therapy, if electrical stimulation remains the intervention of choice, due to its relative simplicity. Other sensors capable of recording intracranial-quality signals without requiring entry into the subdural space are also an intense area of investigation.

The next question becomes what information to sense, where to place sensors, and where, when, and how to deliver therapy. These remain open questions and very active areas of research. There is encouraging evidence that pathological HFOs may help identify regions important to seizure generation in epileptic networks,55,63 though thorough studies of continuous human broad-band EEG for this purpose are just getting under way. Other methods for localizing epileptic networks based on functional imaging are also encouraging, but remain experimental as well. Early work measuring very high frequency IEEG, particularly activity from individual neurons transduced by arrays of microelectrodes in regions felt to be important to seizure generation, is under way, and there is early evidence to suggest that HFOs and unit activity will allow seizures to be predicted or detected earlier than field potential recordings. This work is, as of yet, too preliminary to draw conclusions.

Finally, it is not yet clear how intervention will be controlled by seizure prediction algorithms, as algorithm performance will be the primary determinant of this implementation. Several strategies have been suggested. In one, therapeutic intervention will escalate as the probability of seizure onset increases over time. In this scheme, more benign therapy, such as low current, very confined electrical stimulation, might be applied with modest increases in the probability of seizure onset and then increase in its spatial delivery and intensity if this probability continues to rise over time. Should seizure onset be detected in this scheme, maximal therapy, perhaps coupled with local drug infusion, might be triggered as a last resort, albeit with more potential side effects, to stop imminent clinical symptoms.35
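The escalating strategy described above can be written down as a simple decision rule. The sketch below is purely illustrative. The probability thresholds (0.3 and 0.6), the tier descriptions, and the function name `select_therapy` are hypothetical, and the source proposes no specific values. It assumes that some upstream algorithm supplies an estimated probability of seizure onset and a separate flag for detected seizure onset.

```python
MODEST_RISK = 0.3  # hypothetical threshold for starting benign therapy
HIGH_RISK = 0.6    # hypothetical threshold for escalating therapy


def select_therapy(seizure_probability: float, onset_detected: bool = False) -> str:
    """Map an estimated probability of seizure onset to a therapy tier.
    Detected seizure onset overrides the probability and selects the
    last-resort tier."""
    if not 0.0 <= seizure_probability <= 1.0:
        raise ValueError("seizure_probability must lie in [0, 1]")
    if onset_detected:
        return "maximal therapy"
    if seizure_probability >= HIGH_RISK:
        return "wider, higher-intensity stimulation"
    if seizure_probability >= MODEST_RISK:
        return "low-current, confined stimulation"
    return "no intervention"


# Hypothetical rising probability estimates over successive time windows.
for p in (0.1, 0.35, 0.7):
    print(p, "->", select_therapy(p))
print("onset detected ->", select_therapy(0.7, onset_detected=True))
```

A real controller would also need hysteresis and safety limits, which are omitted here for brevity.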

Another strategy that has been suggested models developing seizures as a critical system, similar to avalanches and volcanoes. In this scheme, increasing probability of seizure onset is likened to snow gathering on avalanche-prone mountains. When this probability rises beyond a certain threshold, electrical stimulation is applied to provoke small, subclinical seizure-like events to release “energy” in the system. In this way a large, potentially convulsive event might be channeled into a series of small, subclinical electrical discharges that are not associated with any symptoms.108

Future Directions

The actual embodiment of antiepileptic devices based on seizure prediction will be determined by the results of ongoing research focused on determining what signals to monitor, how, when, and where to deliver preemptive therapy to abort seizures, and how to optimize seizure prediction performance. This assumes that prospective seizure prediction can be demonstrated, which the authors think is likely, despite the large amount of time devoted to this research over the past 20 years. This optimism is the result of recent breakthroughs in the major areas of direction for the field, identified first at the ISPG’s Bonn Workshop in 2002. It is our opinion, however, that the greatest hope for new seizure prediction-based devices will come in the form of collaborations. The International Collaborative Workshops on Seizure Prediction and the International Seizure Prediction Group provide a framework for this research and its clinical translation, which continues to drive it forward. The group’s next project is a large international database of human and animal model intracranial data on which prospective algorithms can be tested. This joint project will continue to accelerate the group’s efforts and its progress toward making antiepileptic devices based on the neurophysiology of seizure generation a clinical reality.

1. Viglione S, Ordon V, Martin W, Kesler C, inventors; US government, assignee. Epileptic seizure warning system. US patent 3 863 625. November 2, 1973.

2. Lehnertz K, Litt B. The First International Collaborative Workshop on Seizure Prediction: summary and data description. Clin Neurophysiol. 2005;116(3):493-505.

3. Annegers JF. The Epidemiology in Epilepsy. Baltimore: Williams and Wilkins, 1996.

4. D’Alessandro M, Vachtsevanos G, Esteller R, et al. A multi-feature and multi-channel univariate selection process for seizure prediction. Clin Neurophysiol. 2005;116(3):506-516.

5. Iasemidis LD, Shiau DS, Chaovalitwongse W, et al. Adaptive epileptic seizure prediction system. IEEE Trans Biomed Eng. 2003;50(5):616-627.

6. Mormann F, Andrzejak RG, Elger CE, Lehnertz K. Seizure prediction: the long and winding road. Brain. 2007;130(Pt 2):314-333.

7. Wong S, Gardner AB, Krieger AM, Litt B. A stochastic framework for evaluating seizure prediction algorithms using hidden Markov models. J Neurophysiol. 2007;97(3):2525-2532.

8. Winterhalder M, Schelter B, Schulze-Bonhage A, Timmer J. Comment on: “Performance of a seizure warning algorithm based on the dynamics of intracranial EEG.” Epilepsy Res. 2006;72(1):80-81; discussion 82-84.

9. Baumgartner C, Serles W, Leutmezer F, et al. Preictal SPECT in temporal lobe epilepsy: regional cerebral blood flow is increased prior to electroencephalography-seizure onset. J Nucl Med. 1998;39(6):978-982.

10. Weinand ME, Carter LP, el-Saadany WF, Sioutos PJ, Labiner DM, Oommen KJ. Cerebral blood flow and temporal lobe epileptogenicity. J Neurosurg. 1997;86(2):226-232.

11. Rajna P, Clemens B, Csibri E, et al. Hungarian multicentre epidemiologic study of the warning and initial symptoms (prodrome, aura) of epileptic seizures. Seizure. 1997;6(5):361-368.

12. Haut SR, Hall CB, LeValley AJ, Lipton RB. Can patients with epilepsy predict their seizures? Neurology. 2007;68(4):262-266.

13. Haut SR, Hall CB, Masur J, Lipton RB. Seizure occurrence: precipitants and prediction. Neurology. 2007;69(20):1905-1910.

14. Adelson PD, Nemoto E, Scheuer M, Painter M, Morgan J, Yonas H. Noninvasive continuous monitoring of cerebral oxygenation periictally using near-infrared spectroscopy: a preliminary report. Epilepsia. 1999;40(11):1484-1489.

15. Federico P, Abbott DF, Briellmann RS, Harvey AS, Jackson GD. Functional MRI of the pre-ictal state. Brain. 2005;128(Pt 8):1811-1817.

16. Delamont RS, Julu PO, Jamal GA. Changes in a measure of cardiac vagal activity before and after epileptic seizures. Epilepsy Res. 1999;35(2):87-94.

17. Kerem DH, Geva AB. Forecasting epilepsy from the heart rate signal. Med Biol Eng Comput. 2005;43(2):230-239.

18. Novak VV, Reeves LA, Novak P, Low AP, Sharbrough WF. Time-frequency mapping of R-R interval during complex partial seizures of temporal lobe origin. J Auton Nerv Syst. 1999;77(2-3):195-202.

19. Viglione SS, Ordon VA, et al. Detection and Prediction of Epileptic Seizures: McDonnell Douglas Astronautics Company; November 1972. Contract No. SRS-ORDT-71-50.

20. Viglione SS, Walsh GO. Proceedings: Epileptic seizure prediction. Electroencephalogr Clin Neurophysiol. 1975;39(4):435-436.

21. Rogowski Z, Gath I, Bental E. On the prediction of epileptic seizures. Biol Cybern. 1981;42(1):9-15.

22. Siegel A, Grady CL, Mirsky AF. Prediction of spike-wave bursts in absence epilepsy by EEG power-spectrum signals. Epilepsia. 1982;23(1):47-60.

23. Lange HH, Lieb JP, Engel JJr, Crandall PH. Temporo-spatial patterns of pre-ictal spike activity in human temporal lobe epilepsy. Electroencephalogr Clin Neurophysiol. 1983;56(6):543-555.

24. Gotman J, Marciani MG. Electroencephalographic spiking activity, drug levels, and seizure occurrence in epileptic patients. Ann Neurol. 1985;17(6):597-603.

25. Katz A, Marks DA, McCarthy G, Spencer SS. Does interictal spiking change prior to seizures? Electroencephalogr Clin Neurophysiol. 1991;79(2):153-156.

26. Iasemidis LD, Sackellares JC, Zaveri HP, Williams WJ. Phase space topography and the Lyapunov exponent of electrocorticograms in partial seizures. Brain Topogr. 1990;2(3):187-201.

27. Le Van Quyen M, Martinerie J, Navarro V, et al. Anticipation of epileptic seizures from standard EEG recordings. Lancet. 2001;357(9251):183-188.

28. Litt B, Lehnertz K. Seizure prediction and the preseizure period. Curr Opin Neurol. 2002;15(2):173-177.

29. Litt B, Echauz J. Prediction of epileptic seizures. Lancet Neurol. 2002;1(1):22-30.

30. Iasemidis LD, Shiau DS, Pardalos PM, et al. Long-term prospective on-line real-time seizure prediction. Clin Neurophysiol. 2005;116(3):532-544.

31. Elger CE, Lehnertz K. Seizure prediction by non-linear time series analysis of brain electrical activity. Eur J Neurosci. 1998;10(2):786-789.

32. Iasemidis LD, Pardalos PM, Sackellares JC, Shiau DS. Quadratic binary programming and dynamical system approach to determine the predictability of epileptic seizures. J Comb Optim. 2001;5:9-26.

33. Jerger KK, Weinstein SL, Sauer T, Schiff SJ. Multivariate linear discrimination of seizures. Clin Neurophysiol. 2005;116(3):545-551.

34. Schelter B, Winterhalder M, Maiwald T, et al. Testing statistical significance of multivariate time series analysis techniques for epileptic seizure prediction. Chaos. 2006;16(1):013108.

35. Litt B, Esteller R, Echauz J, et al. Epileptic seizures may begin hours in advance of clinical onset: a report of five patients. Neuron. 2001;30(1):51-64.

36. Mormann F, Lehnertz K, David P, Elger CE. Mean phase coherence as a measure for phase synchronization and its applications to the EEG of epilepsy patients. Physica D. 2000;144:358-369.

37. Schindler K, Wiest R, Kollar M, Donati F. EEG analysis with simulated neuronal cell models helps to detect pre-seizure changes. Clin Neurophysiol. 2002;113(4):604-614.

38. Mormann F, Andrzejak RG, Kreuz T, et al. Automated detection of a preseizure state based on a decrease in synchronization in intracranial electroencephalogram recordings from epilepsy patients. Phys Rev E Stat Nonlin Soft Matter Phys. 2003;67(2 Pt 1):021912.

39. Mormann F, Kreuz T, Andrzejak RG, David P, Lehnertz K, Elger CE. Epileptic seizures are preceded by a decrease in synchronization. Epilepsy Res. 2003;53(3):173-185.

40. Aschenbrenner-Scheibe R, Maiwald T, Winterhalder M, Voss HU, Timmer J, Schulze-Bonhage A. How well can epileptic seizures be predicted? An evaluation of a nonlinear method. Brain. 2003;126(Pt 12):2616-2626.

41. Harrison MA, Osorio I, Frei MG, Asuri S, Lai YC. Correlation dimension and integral do not predict epileptic seizures. Chaos. 2005;15(3):33106.

42. Lai YC, Harrison MA, Frei MG, Osorio I. Inability of Lyapunov exponents to predict epileptic seizures. Phys Rev Lett. 2003;91(6):068102.

43. Harrison MA, Frei MG, Osorio I. Accumulated energy revisited. Clin Neurophysiol. 2005;116(3):527-531.

44. Maiwald T, Winterhalder M, Aschenbrenner-Scheibe R, Voss HU, Schulze-Bonhage A, Timmer J. Comparison of three nonlinear seizure prediction methods by means of the seizure prediction characteristic. Physica D. 2004;194:357-368.

45. McSharry PE, Smith LA, Tarassenko L. Prediction of epileptic seizures: are nonlinear methods relevant? Nat Med. 2003;9(3):241-242; author reply 242.

46. Esteller R, Echauz J, D’Alessandro M, et al. Continuous energy variation during the seizure cycle: towards an on-line accumulated energy. Clin Neurophysiol. 2005;116(3):517-526.

47. Jouny CC, Franaszczuk PJ, Bergey GK. Signal complexity and synchrony of epileptic seizures: is there an identifiable preictal period? Clin Neurophysiol. 2005;116(3):552-558.

48. Le Van Quyen M, Soss J, Navarro V, et al. Preictal state identification by synchronization changes in long-term intracranial EEG recordings. Clin Neurophysiol. 2005;116(3):559-568.

49. Mormann F, Kreuz T, Rieke C, et al. On the predictability of epileptic seizures. Clin Neurophysiol. 2005;116(3):569-587.

50. Andrzejak RG, Mormann F, Kreuz T, et al. Testing the null hypothesis of the nonexistence of a preseizure state. Phys Rev E Stat Nonlin Soft Matter Phys. 2003;67(1 Pt 1):010901.

51. Schelter B, Winterhalder M, Feldwisch genannt Drentrup H, et al. Seizure prediction: the impact of long prediction horizons. Epilepsy Res. 2007;73(2):213-217.

52. Schulze-Bonhage A, Kurth C, Carius A, Steinhoff BJ, Mayer T. Seizure anticipation by patients with focal and generalized epilepsy: a multicentre assessment of premonitory symptoms. Epilepsy Res. 2006;70(1):83-88.

53. Worrell GA, Parish L, Cranstoun SD, Jonas R, Baltuch G, Litt B. High-frequency oscillations and seizure generation in neocortical epilepsy. Brain. 2004;127(Pt 7):1496-1506.

54. Niederhauser JJ, Esteller R, Echauz J, Vachtsevanos G, Litt B. Detection of seizure precursors from depth-EEG using a sign periodogram transform. IEEE Trans Biomed Eng. 2003;50(4):449-458.

55. Bragin A, Engel JJr, Wilson CL, Fried I, Buzsaki G. High-frequency oscillations in human brain. Hippocampus. 1999;9(2):137-142.

56. Staba RJ, Frighetto L, Behnke EJ, et al. Increased fast ripple to ripple ratios correlate with reduced hippocampal volumes and neuron loss in temporal lobe epilepsy patients. Epilepsia. 2007.

57. Staba RJ, Wilson CL, Bragin A, Jhung D, Fried I, Engel JJr. High-frequency oscillations recorded in human medial temporal lobe during sleep. Ann Neurol. 2004;56(1):108-115.

58. Ekstrom A, Viskontas I, Kahana M, et al. Contrasting roles of neural firing rate and local field potentials in human memory. Hippocampus. 2007;17(8):606-617.

59. Ekstrom AD, Caplan JB, Ho E, Shattuck K, Fried I, Kahana MJ. Human hippocampal theta activity during virtual navigation. Hippocampus. 2005;15(7):881-889.

60. Ekstrom AD, Kahana MJ, Caplan JB, et al. Cellular networks underlying human spatial navigation. Nature. 2003;425(6954):184-188.

61. Kahana MJ. The cognitive correlates of human brain oscillations. J Neurosci. 2006;26(6):1669-1672.

62. Jacobs J, Kahana MJ, Ekstrom AD, Fried I. Brain oscillations control timing of single-neuron activity in humans. J Neurosci. 2007;27(14):3839-3844.

63. Worrell G, Gardner A, Stead M, et al. High-frequency oscillations in human temporal lobe: simultaneous microwire and clinical electrode recordings. Brain. 2008;131:928-937.

64. Allen PJ, Fish DR, Smith SJ. Very high-frequency rhythmic activity during SEEG suppression in frontal lobe epilepsy. Electroencephalogr Clin Neurophysiol. 1992;82(2):155-159.

65. Fisher RS, Webber WR, Lesser RP, Arroyo S, Uematsu S. High-frequency EEG activity at the start of seizures. J Clin Neurophysiol. 1992;9(3):441-448.

66. Huang CM, White LEJr. High-frequency components in epileptiform EEG. J Neurosci Methods. 1989;30(3):197-201.

67. Staba RJ, Wilson CL, Bragin A, Fried I, Engel JJr. Quantitative analysis of high-frequency oscillations (80-500 Hz) recorded in human epileptic hippocampus and entorhinal cortex. J Neurophysiol. 2002;88(4):1743-1752.

68. Jirsch JD, Urrestarazu E, LeVan P, Olivier A, Dubeau F, Gotman J. High-frequency oscillations during human focal seizures. Brain. 2006;129(Pt 6):1593-1608.

69. Bragin A, Mody I, Wilson CL, Engel JJr. Local generation of fast ripples in epileptic brain. J Neurosci. 2002;22(5):2012-2021.

70. Schiff SJ, Colella D, Jacyna GM, et al. Brain chirps: spectrographic signatures of epileptic seizures. Clin Neurophysiol. 2000;111(6):953-958.

71. Rampp S, Stefan H. Fast activity as a surrogate marker of epileptic network function? Clin Neurophysiol. 2006;117(10):2111-2117.

72. Firpi H, Smart O, Worrell G, Marsh E, Dlugos D, Litt B. High-frequency oscillations detected in epileptic networks using swarmed neural-network features. Ann Biomed Eng. 2007;35(9):1573-1584.

73. Gardner AB, Worrell GA, Marsh E, Dlugos D, Litt B. Human and automated detection of high-frequency oscillations in clinical intracranial EEG recordings. Clin Neurophysiol. 2007;118(5):1134-1143.

74. Dichter M, Spencer WA. Hippocampal penicillin “spike” discharge: epileptic neuron or epileptic aggregate? Neurology. 1968;18(3):282-283.

75. Dichter M, Spencer WA. Penicillin-induced interictal discharges from the cat hippocampus. II. Mechanisms underlying origin and restriction. J Neurophysiol. 1969;32(5):663-687.

76. Dichter M, Spencer WA. Penicillin-induced interictal discharges from the cat hippocampus. I. Characteristics and topographical features. J Neurophysiol. 1969;32(5):649-662.

77. Dzhala VI, Staley KJ. Transition from interictal to ictal activity in limbic networks in vitro. J Neurosci. 2003;23(21):7873-7880.

78. Dzhala VI, Staley KJ. Mechanisms of fast ripples in the hippocampus. J Neurosci. 2004;24(40):8896-8906.

79. McCormick DA, Contreras D. On the cellular and network bases of epileptic seizures. Annu Rev Physiol. 2001;63:815-846.

80. Traub RD, Contreras D, Cunningham MO, et al. Single-column thalamocortical network model exhibiting gamma oscillations, sleep spindles, and epileptogenic bursts. J Neurophysiol. 2005;93(4):2194-2232.

81. Huguenard JR, McCormick DA. Thalamic synchrony and dynamic regulation of global forebrain oscillations. Trends Neurosci. 2007;30(7):350-356.

82. Durand D. Electrical stimulation can inhibit synchronized neuronal activity. Brain Res. 1986;382(1):139-144.

83. Litt B, Baltuch G. Brain stimulation for epilepsy. Epilepsy Behav. 2001;2:S61-S67.

84. Theodore WH, Fisher RS. Brain stimulation for epilepsy. Lancet Neurol. 2004;3(2):111-118.

85. Litt B. Evaluating devices for treating epilepsy. Epilepsia. 2003;44(Suppl 7):30-37.

86. Fisher RS, Handforth A. Reassessment: vagus nerve stimulation for epilepsy: a report of the Therapeutics and Technology Assessment Subcommittee of the American Academy of Neurology. Neurology. 1999;53(4):666-669.

87. Fisher RS, Krauss GL, Ramsay E, Laxer K, Gates J. Assessment of vagus nerve stimulation for epilepsy: report of the Therapeutics and Technology Assessment Subcommittee of the American Academy of Neurology. Neurology. 1997;49(1):293-297.

88. Morrell M. Brain stimulation for epilepsy: can scheduled or responsive neurostimulation stop seizures? Curr Opin Neurol. 2006;19(2):164-168.

89. Graves NM, Fisher RS. Neurostimulation for epilepsy, including a pilot study of anterior nucleus stimulation. Clin Neurosurg. 2005;52:127-134.

90. Mirski MA, Rossell LA, Terry JB, Fisher RS. Anticonvulsant effect of anterior thalamic high frequency electrical stimulation in the rat. Epilepsy Res. 1997;28(2):89-100.

91. Hodaie M, Wennberg RA, Dostrovsky JO, Lozano AM. Chronic anterior thalamus stimulation for intractable epilepsy. Epilepsia. 2002;43(6):603-608.

92. Kerrigan JF, Litt B, Fisher RS, et al. Electrical stimulation of the anterior nucleus of the thalamus for the treatment of intractable epilepsy. Epilepsia. 2004;45(4):346-354.

93. Osorio I, Frei MG, Sunderam S, et al. Automated seizure abatement in humans using electrical stimulation. Ann Neurol. 2005;57(2):258-268.

94. Tellez-Zenteno JF, McLachlan RS, Parrent A, Kubu CS, Wiebe S. Hippocampal electrical stimulation in mesial temporal lobe epilepsy. Neurology. 2006;66(10):1490-1494.

95. Velasco AL, Velasco F, Velasco M, Trejo D, Castro G, Carrillo-Ruiz JD. Electrical stimulation of the hippocampal epileptic foci for seizure control: a double-blind, long-term follow-up study. Epilepsia. 2007;48(10):1895-1903.

96. Vonck K, Boon P, Achten E, De Reuck J, Caemaert J. Long-term amygdalohippocampal stimulation for refractory temporal lobe epilepsy. Ann Neurol. 2002;52(5):556-565.

97. Vonck K, Boon P, Claeys P, Dedeurwaerdere S, Achten R, Van Roost D. Long-term deep brain stimulation for refractory temporal lobe epilepsy. Epilepsia. 2005;46(Suppl 5):98-99.

98. Kossoff EH, Ritzl EK, Politsky JM, et al. Effect of an external responsive neurostimulator on seizures and electrographic discharges during subdural electrode monitoring. Epilepsia. 2004;45(12):1560-1567.

99. Fountas KN, Smith JR, Murro AM, Politsky J, Park YD, Jenkins PD. Implantation of a closed-loop stimulation in the management of medically refractory focal epilepsy: a technical note. Stereotact Funct Neurosurg. 2005;83(4):153-158.

100. Worrell G, Wharen R, Goodman R, et al. Safety and evidence for efficacy of an implantable responsive neurostimulator (RNS®) for the treatment of medically intractable partial onset epilepsy in adults. Paper presented at: Annual Meeting of the American Epilepsy Society; December, 2005.

101. Wada JA, Tsuchimochi H. Cingulate kindling in Senegalese baboons, Papio papio. Epilepsia. 1995;36(11):1142-1151.

102. DeGiorgio CM, Shewmon DA, Whitehurst T. Trigeminal nerve stimulation for epilepsy. Neurology. 2003;61(3):421-422.

103. Fanselow EE, Reid AP, Nicolelis MA. Reduction of pentylenetetrazole-induced seizure activity in awake rats by seizure-triggered trigeminal nerve stimulation. J Neurosci. 2000;20(21):8160-8168.

104. Yang XF, Duffy DW, Morley RE, Rothman SM. Neocortical seizure termination by focal cooling: temperature dependence and automated seizure detection. Epilepsia. 2002;43(3):240-245.

105. Bikson M, Lian J, Hahn PJ, Stacey WC, Sciortino C, Durand DM. Suppression of epileptiform activity by high frequency sinusoidal fields in rat hippocampal slices. J Physiol. 2001;531(Pt 1):181-191.

106. Gluckman BJ, Nguyen H, Weinstein SL, Schiff SJ. Adaptive electric field control of epileptic seizures. J Neurosci. 2001;21(2):590-600.

107. Engel J, editor. Surgical Treatment of the Epilepsies. 1st ed. New York: Raven Press, 1987.

108. Worrell GA, Cranstoun SD, Echauz J, Litt B. Evidence for self-organized criticality in human epileptic hippocampus. NeuroReport. 2002;13(16):2017-2021.