Imagine an oncologist at a regional hospital opening a “FHIR-enabled” electronic health record (EHR), expecting to see her new patient’s past lab results and allergy history. Instead of a neatly formatted summary, she finds half-filled CSV files and scanned PDFs. She faxes a request to the referring clinic, then picks up the phone to follow up. As she waits on hold, her colleague jokes that all the money spent on modern interoperability still hasn’t replaced the fax machine. It’s a scene repeated across countless clinics despite the fanfare around the Fast Healthcare Interoperability Resources (FHIR) standard. Nearly all certified EHR vendors now support FHIR to some degree, but external patient records often arrive late, buried in unreadable formats, or stripped of context. Clinicians still re-enter medications by hand and chase records via phone calls because health-information exchange is rarely seamless.

This disconnect between promise and reality is the heart of today’s interoperability crisis. FHIR brought web-style APIs to health data in 2012, and FHIR-based APIs are now required for certified health IT in the United States. Adoption is increasing, yet meaningful interoperability remains elusive. The reasons are complex, spanning technical gaps, business incentives, workflow misalignment and regulatory fragmentation. In this article, we explore why healthcare interoperability continues to falter despite FHIR’s growing footprint and what must change to realize its potential.

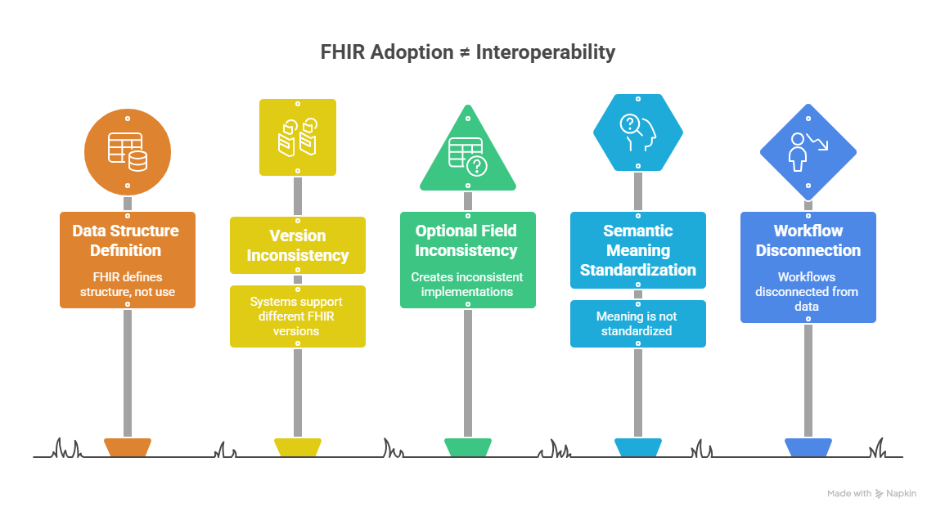

The illusion of standards: FHIR ≠ standardization

FHIR defines how data is structured, not how it is used

FHIR was designed to create a common language for health data exchange, but “common” isn’t always “consistent.” Industry observers note that FHIR isn’t implemented the same way everywhere; vendors support different versions (DSTU2, STU3, R4, R5) simultaneously, and even within a single vendor, resources vary in their capabilities. Some major EHR platforms support FHIR R4 but may restrict certain interactions for specific resources, while other vendors still expose older versions of the standard. Some implementations only allow read-only access, limiting the usefulness of FHIR APIs in real clinical workflows. This fragmentation forces healthcare organizations to build custom mappings, use middleware, or limit interoperability projects to narrow use cases. One study found that only 51 percent of data elements needed for clinical trials were fully supported by FHIR, forcing teams to build custom data transformations.
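One practical way to see this variation is to read what a server declares about itself. A FHIR server publishes a CapabilityStatement at `[base]/metadata`, listing its FHIR version and the interactions it supports per resource type. The sketch below, in Python, compares such a statement against what an application needs. The sample statement and the `app_needs` table are hypothetical and trimmed for illustration; a real statement is far longer and would be fetched over HTTP.

```python
from typing import Dict, List, Set


def summarize_capabilities(capability: dict) -> Dict[str, Set[str]]:
    """Map each resource type to the set of interactions the server declares."""
    summary: Dict[str, Set[str]] = {}
    for rest in capability.get("rest", []):
        if rest.get("mode") != "server":
            continue  # skip client-mode entries
        for resource in rest.get("resource", []):
            codes = {i["code"] for i in resource.get("interaction", []) if "code" in i}
            summary.setdefault(resource["type"], set()).update(codes)
    return summary


def missing_interactions(capability: dict, required: Dict[str, List[str]]) -> Dict[str, List[str]]:
    """Return, per resource type, the required interactions the server does not declare."""
    declared = summarize_capabilities(capability)
    gaps: Dict[str, List[str]] = {}
    for resource_type, needed in required.items():
        absent = sorted(set(needed) - declared.get(resource_type, set()))
        if absent:
            gaps[resource_type] = absent
    return gaps


# Hypothetical, trimmed CapabilityStatement (assumption: not taken from any real vendor).
sample_capability = {
    "resourceType": "CapabilityStatement",
    "fhirVersion": "4.0.1",
    "rest": [{
        "mode": "server",
        "resource": [
            {"type": "Patient", "interaction": [{"code": "read"}, {"code": "search-type"}]},
            {"type": "MedicationRequest", "interaction": [{"code": "read"}]},
        ],
    }],
}

# Hypothetical needs of a medication-reconciliation app.
app_needs = {
    "Patient": ["read", "search-type"],
    "MedicationRequest": ["read", "create"],
    "AllergyIntolerance": ["read"],
}

print("FHIR version:", sample_capability["fhirVersion"])
print("Gaps:", missing_interactions(sample_capability, app_needs))
```

In this made-up case the server is read-only for MedicationRequest and does not expose AllergyIntolerance at all, which is exactly the kind of gap that pushes teams toward middleware or narrower use cases.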

Semantic variation further undermines the promise of FHIR. FHIR exposes structured resources, but the meaning of those fields isn’t always preserved across systems. A review of ICD-10 diagnoses found that only 54 percent of codes entered by clinicians were appropriate to the clinical scenario, and 26 percent were completely missing. When medication orders or lab tests flow through multiple systems, differences in naming conventions, units and value sets lead to wrong-dose or wrong-drug errors. Health Information Exchanges report that nearly half of incoming lab results rely on local proprietary codes; up to 19 percent of these tests cannot be mapped to national standards like LOINC. These semantic mismatches mean that even technically compatible FHIR APIs don’t guarantee trustworthy data.
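To make the mapping problem concrete, here is a minimal sketch of translating local lab codes into LOINC codings and tracking what cannot be mapped. The local codes and the `LOCAL_TO_LOINC` table are hypothetical; in practice the mapping would come from a curated terminology service rather than a hard-coded dictionary.

```python
from typing import Dict, List, Optional, Tuple

LOINC_SYSTEM = "http://loinc.org"

# Hypothetical local-to-LOINC map (assumption: illustrative entries only).
LOCAL_TO_LOINC: Dict[str, str] = {
    "GLU": "2345-7",  # Glucose [Mass/volume] in Serum or Plasma
    "HGB": "718-7",   # Hemoglobin [Mass/volume] in Blood
}


def to_loinc_coding(local_code: str) -> Optional[dict]:
    """Return a FHIR-style coding for a local code, or None if no mapping exists."""
    loinc = LOCAL_TO_LOINC.get(local_code.strip().upper())
    if loinc is None:
        return None
    return {"system": LOINC_SYSTEM, "code": loinc}


def map_results(local_codes: List[str]) -> Tuple[List[dict], List[str]]:
    """Split incoming codes into mapped codings and unmapped leftovers."""
    mapped: List[dict] = []
    unmapped: List[str] = []
    for code in local_codes:
        coding = to_loinc_coding(code)
        if coding is None:
            unmapped.append(code)
        else:
            mapped.append(coding)
    return mapped, unmapped


incoming = ["GLU", "hgb", "XYZ-PANEL-7"]
mapped, unmapped = map_results(incoming)
print(f"{len(unmapped)} of {len(incoming)} codes unmapped:", unmapped)
```

Keeping the unmapped list, rather than silently dropping those results, gives a team something to review and a running measure of how much incoming data still lacks a standard code.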

Technical and resource barriers

- Skills gap and complexity – Implementing FHIR isn’t a simple “flip the switch” upgrade. It demands a blend of technical architecture knowledge, understanding of clinical workflows, and expertise in data security. A recent guide lists the lack of in-house FHIR expertise as the single biggest barrier to adoption. FHIR’s resource-based model introduces numerous interdependencies and a steep learning curve. Smaller providers with limited IT teams often rely on vendors for integration work, making sustainable in-house expertise even harder to develop.

- Cost concerns – Upgrading infrastructure, training teams, and integrating FHIR into legacy EHRs are expensive undertakings. Many organizations struggle to justify the investment without clear short-term returns. A recent publication notes that converting existing HL7 v2 interfaces to FHIR can be prohibitively costly, especially when current systems still “work.” Providers operate on thin margins, so they invest in revenue-cycle optimization before interoperability: the financial benefits of data sharing accrue to payers more than to providers.

- Legacy system integration – Modern interoperability standards must coexist with legacy systems built decades ago. Many healthcare systems run on older platforms never designed for FHIR. Making these systems FHIR-capable requires middleware, custom APIs or even complete platform replacements, all of which are disruptive and costly. Another article echoes this point: technical complexity in integrating FHIR with legacy systems and disparate vendor platforms is one of the primary barriers to adoption.

- Security and compliance hurdles – Handling sensitive patient data necessitates robust security measures. FHIR projects must implement OAuth 2.0-based authorization (typically through SMART on FHIR), user authentication, encryption, and audit logging from day one. Smaller clinics or resource-constrained organizations may not have the expertise or budgets to implement these controls effectively. Compliance concerns around HIPAA and a patchwork of state privacy laws further slow adoption.
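To give a sense of what the security bullet involves, the sketch below builds the first step of a SMART on FHIR standalone launch: an OAuth 2.0 authorization request protected with PKCE (RFC 7636). All endpoints, the client ID and the scopes are placeholders. A real app would discover the authorization endpoint from the server’s `.well-known/smart-configuration` document and would still need the token exchange, token storage and audit logging that are not shown here.

```python
import base64
import hashlib
import secrets
from typing import List, Tuple
from urllib.parse import parse_qs, urlencode, urlparse


def make_pkce_pair() -> Tuple[str, str]:
    """Return (code_verifier, code_challenge) using the S256 method."""
    verifier = secrets.token_urlsafe(64)  # URL-safe random string
    digest = hashlib.sha256(verifier.encode("ascii")).digest()
    # Base64url-encode the hash and drop the padding, as PKCE requires.
    challenge = base64.urlsafe_b64encode(digest).rstrip(b"=").decode("ascii")
    return verifier, challenge


def build_authorize_url(
    authorization_endpoint: str,
    client_id: str,
    redirect_uri: str,
    fhir_base_url: str,
    scopes: List[str],
    state: str,
    code_challenge: str,
) -> str:
    """Assemble the authorization request URL the user's browser is sent to."""
    params = {
        "response_type": "code",
        "client_id": client_id,
        "redirect_uri": redirect_uri,
        "scope": " ".join(scopes),
        "state": state,               # protects against cross-site request forgery
        "aud": fhir_base_url,         # the FHIR server the token is intended for
        "code_challenge": code_challenge,
        "code_challenge_method": "S256",
    }
    return f"{authorization_endpoint}?{urlencode(params)}"


# Placeholder values (assumption: none of these refer to a real deployment).
verifier, challenge = make_pkce_pair()
state = secrets.token_urlsafe(16)
url = build_authorize_url(
    authorization_endpoint="https://auth.example.org/authorize",
    client_id="my-app",
    redirect_uri="https://app.example.org/callback",
    fhir_base_url="https://fhir.example.org/r4",
    scopes=["launch/patient", "patient/Observation.read"],
    state=state,
    code_challenge=challenge,
)
query = parse_qs(urlparse(url).query)
print(query["aud"][0], query["code_challenge_method"][0])
```

Even this first step has several moving parts, which helps explain why small teams without security expertise find the full flow hard to implement and maintain.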

Organizational and workflow barriers

Misalignment with clinician workflow

Interoperability requires more than technical connectivity—it must fit into clinicians’ daily routines. Surveys indicate that only 44 percent of clinicians feel their systems integrate outside information as expected, and just 42 percent routinely use external data. Poor data usability leads to alert fatigue; between 54 and 91 percent of clinical decision-support alerts are ignored because they’re duplicative or irrelevant. In a review of over 100 million clinical notes, half the content was duplicated, creating bloated records that slow down care. Without alignment between interoperability tools and clinical workflow, even well-implemented APIs go unused.

Manual workarounds persist

When systems don’t deliver, clinicians revert to manual workarounds. As of 2021, 70 percent of hospitals still used fax machines to exchange records. In rural and smaller hospitals, nearly half rely primarily on fax or mail to respond to electronic requests for patient information. Real-time data integration remains patchy: 38 percent of hospitals cannot count on external data at the point of care. Medication reconciliation still involves manual review because fragmented sources and poor matching persist.

Consent and privacy fragmentation

Even when technical connections exist, fragmented consent models can stall data sharing. In the United States, only seven states follow an opt-in model for sharing health records; fifteen default to opt-out, while the rest have hybrid or unclear policies. Sensitive data like substance-use treatment or reproductive health records carry additional restrictions. Patients increasingly expect granular control over their data—by type, time or recipient—but most systems can’t manage dynamic consent preferences. Without built-in consent logic, organizations either over-block critical information or overshare sensitive data, undermining patient trust and risking legal exposure.
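The kind of built-in consent logic described above can be sketched as a small rule check over data type, recipient and time. Everything in this example is hypothetical: the `ConsentRule` fields, the category names and the “deny wins” policy are illustrative assumptions, not a rendering of any state law or of the FHIR Consent resource.

```python
from dataclasses import dataclass
from datetime import date
from typing import FrozenSet, List, Optional


@dataclass(frozen=True)
class ConsentRule:
    """One patient preference. Field names are hypothetical."""
    permit: bool
    categories: FrozenSet[str]            # e.g. {"lab", "substance-use"}
    recipients: Optional[FrozenSet[str]]  # None means any recipient
    valid_from: date
    valid_until: date

    def applies(self, category: str, recipient: str, on: date) -> bool:
        return (
            category in self.categories
            and (self.recipients is None or recipient in self.recipients)
            and self.valid_from <= on <= self.valid_until
        )


def may_share(
    rules: List[ConsentRule],
    category: str,
    recipient: str,
    on: date,
    default_permit: bool,
) -> bool:
    """Deny rules win over permit rules; with no matching rule, use the default.

    default_permit=False approximates an opt-in jurisdiction,
    default_permit=True an opt-out one.
    """
    matching = [r for r in rules if r.applies(category, recipient, on)]
    if any(not r.permit for r in matching):
        return False
    if matching:
        return True
    return default_permit


rules = [
    ConsentRule(True, frozenset({"lab", "medication"}), None,
                date(2024, 1, 1), date(2024, 12, 31)),
    ConsentRule(False, frozenset({"substance-use"}), None,
                date(2024, 1, 1), date(2024, 12, 31)),
]

today = date(2024, 6, 1)
print(may_share(rules, "lab", "oncology-clinic", today, default_permit=False))
print(may_share(rules, "substance-use", "oncology-clinic", today, default_permit=True))
```

Making the jurisdiction default an explicit parameter keeps the opt-in versus opt-out difference visible in one place instead of scattering it through the sharing code.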

Business incentives and vendor lock-in

Interoperability is often hindered by business considerations rather than technical ones. Some EHR vendors charge steep fees, up to US$15,000 per year, for API access. Others limit API functionality (e.g., read-only access or throttled request limits), undermining the potential of even the best-designed solutions. Forty percent of digital health companies report they cannot get the data they need from certified systems. Meanwhile, nearly two-thirds of interoperability initiatives fail to meet return-on-investment expectations because the financial benefits accrue to stakeholders other than those paying for the upgrades. Regulatory certification sets a baseline, but over 60 percent of certified vendors skip or underperform on crucial usability tests, highlighting a gap between compliance and real-world effectiveness.

Complexity of FHIR itself

Beyond inconsistent implementations, FHIR’s design introduces its own challenges. One analysis argues that widespread adoption has been sluggish because most data exchange still relies on flat files and basic HL7 v2 messaging, and converting those interfaces to FHIR can be prohibitively expensive. FHIR’s resource-based model is more detailed and complex than its predecessor HL7 v2: each resource has numerous elements and can reference other resources, creating webs of interdependencies. Internal teams used to the simplicity of HL7 v2 often feel overwhelmed; the steep learning curve increases development time, requires more sophisticated testing, and demands deeper coding skills. Without in-house expertise, organizations become dependent on consultants or vendor-specific solutions, which perpetuates the very vendor lock-in FHIR sought to overcome.
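The difference in shape is easy to see side by side. The sketch below shows a hypothetical HL7 v2 result segment next to a hypothetical, trimmed FHIR Observation, then walks the Observation to list every other resource it points to. The identifiers and values are made up for illustration.

```python
from typing import Any, Iterator

# Hypothetical HL7 v2 result segment: one flat, pipe-delimited line.
# Patient and order context travel in the same message (PID and OBR segments).
obx_segment = "OBX|1|NM|718-7^Hemoglobin^LN||13.2|g/dL|12.0-16.0|N|||F"

# Hypothetical, trimmed FHIR Observation carrying the same result.
observation = {
    "resourceType": "Observation",
    "status": "final",
    "code": {"coding": [{"system": "http://loinc.org", "code": "718-7"}]},
    "subject": {"reference": "Patient/123"},
    "encounter": {"reference": "Encounter/456"},
    "performer": [
        {"reference": "Practitioner/789"},
        {"reference": "Organization/42"},
    ],
    "specimen": {"reference": "Specimen/abc"},
    "valueQuantity": {
        "value": 13.2,
        "unit": "g/dL",
        "system": "http://unitsofmeasure.org",
        "code": "g/dL",
    },
}


def iter_references(node: Any) -> Iterator[str]:
    """Yield every literal reference string found anywhere in parsed FHIR JSON."""
    if isinstance(node, dict):
        ref = node.get("reference")
        if isinstance(ref, str):
            yield ref
        for value in node.values():
            yield from iter_references(value)
    elif isinstance(node, list):
        for item in node:
            yield from iter_references(item)


references = list(iter_references(observation))
print(f"Observation depends on {len(references)} other resources:")
for ref in references:
    print(" ", ref)
```

Each of those references is a separate resource that may need to be fetched, validated and kept in sync, which is where much of the extra testing and development effort comes from.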

A path forward: aligning people, processes and technology

Despite these hurdles, FHIR remains the most promising standard for unifying healthcare data. It simply can’t succeed alone. Achieving true interoperability requires a multi-pronged strategy that addresses technical, organizational and regulatory challenges:

- Invest in education and workforce development – Healthcare leaders must cultivate in-house expertise in FHIR, data modeling and security. Universities and professional societies should offer training programs that combine technical skills with clinical context. Partnerships between hospitals, vendors and academia can accelerate knowledge transfer and reduce the skills gap.

- Adopt and enforce consistent implementation guides – National and regional bodies should publish detailed implementation guides and conformance tests to reduce variation in FHIR profiles. Initiatives like the Trusted Exchange Framework and Common Agreement (TEFCA) provide a governance model for data exchange; aligning with such frameworks fosters consistency. Healthcare organizations should adopt open standards (FHIR, SNOMED CT, LOINC) and avoid vendor-specific extensions that fragment interoperability.

- Use integration platforms and cloud-based architectures – Instead of building bespoke connections between every system, organizations can use Health Information Exchanges or integration platforms that normalize data across formats. Cloud-based solutions support scalable, secure and real-time access to critical health data. They also reduce on-site infrastructure costs, helping smaller providers overcome resource barriers.

- Prioritize data governance and consent management – Robust data governance isn’t optional. Organizations must establish clear policies around data access, usage rights, and consent management. Emerging models such as patient-controlled identity and dynamic consent frameworks can empower patients while ensuring compliance. Aligning state laws and federal guidance can reduce the uncertainty that stalls data sharing.

- Align incentives and accountability – Policymakers should tie reimbursement and certification not only to technical capability but also to outcomes. Penalties for information blocking must be enforced consistently, and usability tests should be mandatory for certification. Value-based care models can help align ROI across providers and payers, encouraging investment in interoperability.

- Build interoperability into workflow design – Technology should adapt to clinicians’ needs, not the other way around. Systems must surface external data at the right point in the workflow, summarize it intelligently, and reduce alert fatigue. Cross-functional teams, including clinicians, informaticians, and UX designers, should co-design interfaces to ensure that interoperability improvements translate into better care.
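Of the recommendations above, the call for implementation guides backed by conformance tests is the easiest to illustrate in code. The sketch below checks whether a resource claims a known profile in `meta.profile` and carries the elements that profile requires. The profile URL and its required-element list are hypothetical and heavily simplified; real conformance testing works from full StructureDefinitions and a dedicated validator, not a hand-written table.

```python
from typing import Dict, List

# Hypothetical stand-in for an implementation guide's rules:
# profile URL -> top-level elements that must be present and non-empty.
REQUIRED_ELEMENTS: Dict[str, List[str]] = {
    "http://example.org/fhir/StructureDefinition/regional-patient": [
        "identifier",
        "name",
        "gender",
    ],
}


def check_conformance(resource: dict) -> List[str]:
    """Return a list of human-readable problems; an empty list means no issues found."""
    problems: List[str] = []
    profiles = resource.get("meta", {}).get("profile", [])
    known = [p for p in profiles if p in REQUIRED_ELEMENTS]
    if not known:
        problems.append("resource does not claim a known profile")
    for profile in known:
        for element in REQUIRED_ELEMENTS[profile]:
            if not resource.get(element):
                problems.append(f"{profile}: missing required element '{element}'")
    return problems


PROFILE = "http://example.org/fhir/StructureDefinition/regional-patient"

complete_patient = {
    "resourceType": "Patient",
    "meta": {"profile": [PROFILE]},
    "identifier": [{"system": "http://example.org/mrn", "value": "12345"}],
    "name": [{"family": "Rivera", "given": ["Ana"]}],
    "gender": "female",
}

incomplete_patient = {
    "resourceType": "Patient",
    "meta": {"profile": [PROFILE]},
    "name": [{"family": "Rivera"}],
}

print(check_conformance(complete_patient))
for problem in check_conformance(incomplete_patient):
    print(problem)
```

Running checks like this at the boundary, before data enters a downstream system, turns an implementation guide from a document into something that can be enforced.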

Conclusion – connecting the dots

FHIR has fundamentally changed how we think about health-data exchange. It has catalyzed API-driven innovation and enabled patient-facing apps, yet it hasn’t magically solved interoperability. Skills gaps, legacy systems, semantic mismatches, vendor lock-in, workflow friction, and fragmented consent laws continue to frustrate clinicians and IT teams. Interoperability is as much about governance, incentives, and cultural change as it is about technology. To move from fragmented records to seamless data flows, organizations must embrace open standards while investing in training, integration platforms, robust data governance, and aligned incentives.

When those pieces come together, FHIR can live up to its promise—not just as a technical specification, but as a foundation for coordinated, patient-centered care. The next step is to translate lessons learned into action and invest in healthcare interoperability solutions that turn standards into real-world performance. Only then will clinicians open a patient’s record and find the information they need, when they need it.